Even if you don’t know Nick Bostrom’s name, you’re almost certainly familiar with the idea he’s most famous for.

Back in 2003, when he was at Oxford, Bostrom penned an influential philosophical paper with the incredible title of “Are You Living in a Computer Simulation?” Loosely speaking, his argument was that sufficiently advanced civilizations will eventually build sophisticated simulations of their own ancestors — and that, given enough time in the simulation, those simulated beings will develop their own simulation inside the simulation, where a new set of simulated ancestors will do the same thing, ad infinitum.

You probably get a sense of where this is headed: with all these layers of simulated reality, Bostrom thinks that it’s very unlikely that we humans are actually living in the original “base” reality. Instead, statistically speaking, we’re probably in some tranche of an Escher-esque cosmic video game.

Needless to say, the whole thing sparked decades of debate. Big names including Elon Musk have become proponents, while many other experts have argued against the idea.

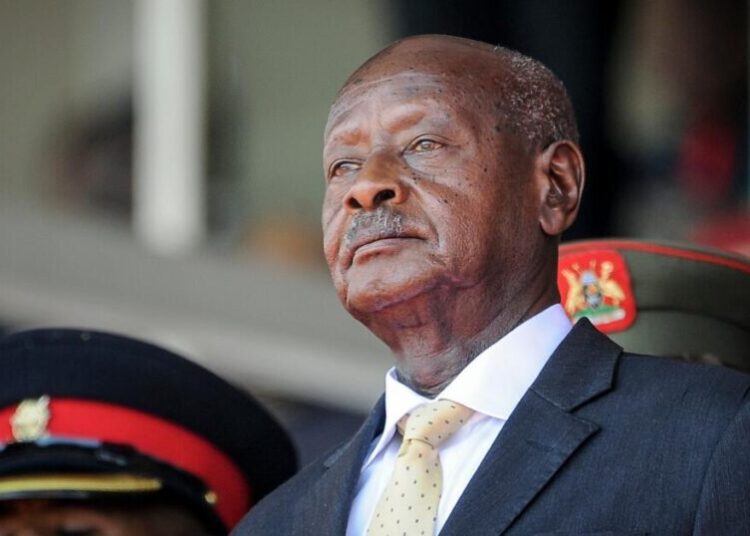

For his part, Bostrom has turned his attention to a new hot topic: artificial intelligence. For a while, he seemed to be drifting toward AI doomerism, issuing a grave warning in 2019 that AI posed a greater risk to humankind than climate change.

Since then, though, he seems to be changing tack, albeit with his signature flair for ideas so outrageous that they almost sound like parody. In a new working paper, for instance, he argues that developing advanced AI may well result in the extinction of humankind, but that it’s worth the risk because the upsides of superintelligence could be so profound.

“I call myself a fretful optimist,” Bostrom told Wired’s Steven Levy in a new interview, deploying a term he’s used before. “I am very excited about the potential for radically improving human life and unlocking possibilities for our civilization. That’s consistent with the real possibility of things going wrong.”

“I guess I’ve been irked by some of the arguments made by doomers who say that if you build AI, you’re going to kill me and my children and how dare you,” he continued, taking aim at his fellow public intellectual Eliezer Yudkowsky. “Like the recent book ‘If Anyone Builds It, Everyone Dies.’ Even more probable is that if nobody builds it, everyone dies! That’s been the experience for the last several 100,000 years.”

Levy pushed back, pointing out that “in the doomer scenario everybody dies and there’s no more people being born. Big difference.”

“I have obviously been very concerned with that,” Bostrom retorted. “But in this paper, I’m looking at a different question, which is, what would be best for the currently existing human population like you and me and our families and the people in Bangladesh? It does seem like our life expectancy would go up if we develop AI, even if it is quite risky.”

These already head-scratching lines hit different when you remember that Bostrom believes it’s likely that we’re already living inside a computer simulation. In his headcanon, do all those levels of simulated ancestors develop their own superintelligence, and what does that have to do with the new simulations they feel compelled to build? If AI wipes out humankind, does it build its own simulation? If so, is it simulating its human ancestors, or its creation by humankind? Heck, if our entire world is simulated, are we AI?

We’ll leave it up to readers to take another bong hit while they try to make sense of it all. Perhaps nobody could sum it up better than Levy, who ended the interview with a disclaimer that it had been “edited for length and coherence.”


The post Man Behind Simulation Hypothesis Warns That Extinction of Humanity Is a Risk We Have to Take appeared first on Futurism.