On an AstroTurf lawn in Berkeley, California, one recent Friday, content creators used to making videos about romance novels, climate change and tech tips got advice on covering a more theoretical topic: How to spread the message that rogue artificial intelligence could wipe out humankind.

The gathering was held at a sprawling event space popular with a Bay Area community focused on the possibility that superintelligent AI might lead to human extinction — a cause sometimes dubbed AI safety.

The crowd hooted as Jeffrey Ladish, an affable former security engineer at the AI start-up Anthropic, glided to the stage on inline skates, sporting a muscle tank and mane of golden hair, to join a panel of experts in the art of talking to regular people about AI catastrophe.

Ladish said he quit the company behind the popular chatbot Claude in 2022 to focus on research that could help policymakers understand the ways AI can evade human control. A couple of years after launching the nonprofit Palisade Research, he decided research on that topic was now plentiful but the field needed communicators to translate those findings to the public.

“That requires a bunch of people to go take things that folks here are figuring out and [explain them] to the rest of the world,” he said.

Ladish himself has embraced that mission. In recent months he appeared in a viral video with Sen. Bernie Sanders (I-Vermont) about the threat of superhuman AI and featured prominently in the trailer for “The AI Doc,” a documentary on that potential threat, which has been viewed 5.8 million times on YouTube.

He is part of a recent drive by some in AI safety to convince the masses that superintelligent AI could spell the end of human civilization, a drive that includes sponsoring social media posts and partnering with influencers such as author and YouTube star Hank Green.

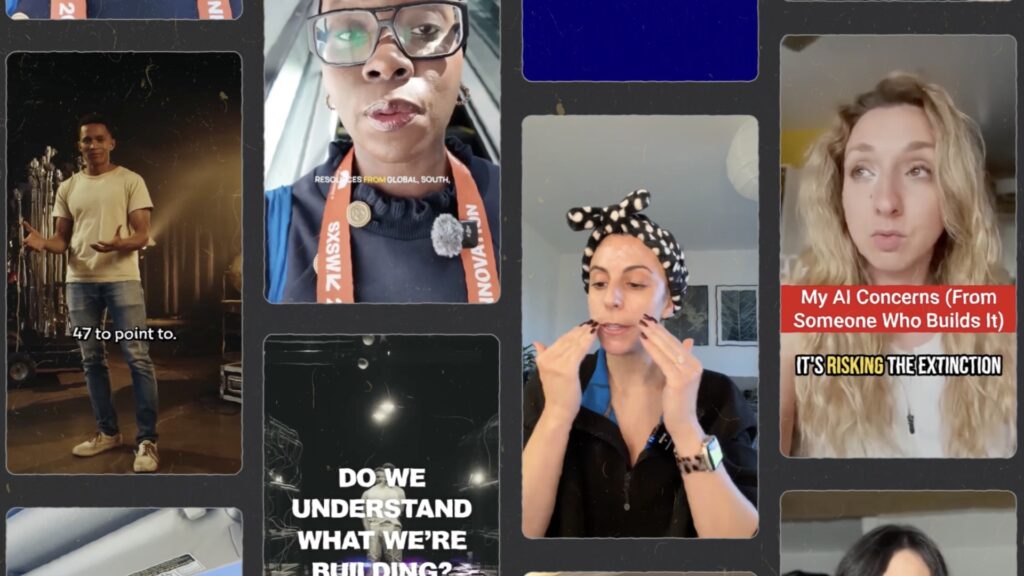

The event in Berkeley marked the end of an eight-week fellowship to support experienced creators including former climate change activists and a recent convert from BookTok, with the mandate that 60 percent of their content during the eight weeks has to be focused on AI’s societal impact.

The effort to seed content about the dangers of AI across the internet comes as the technology’s growing influence has driven debates about AI into the political mainstream. A wave of viral videos warning about the technology could cause headaches for Silicon Valley-backed super PACs as they seek to minimize restrictions on the industry. Surveys already indicate that most Americans support government rules for AI.

Most AI experts in academia and industry say there’s no scientific support for claims of imminent danger to the entire species, arguing that the doomsday forecasts overestimate existing technology and under-appreciate the complexity of the real world.

But AI safety groups moving to sign up content creators believe the blistering pace of AI development should make the public more receptive to their predictions. Some want an immediate halt to any work on superintelligent AI. Others want people to realize that the time to act may be running out.

The influencers being approached by AI safety advocates tend to be science educators or creators who already post about AI. They include the hosts of the YouTube channel Veritasium, which has more than 20 million subscribers, and Catherine Goetze, who makes videos with tips on using AI under the TikTok handle @askcatgpt.

ControlAI, a Britain-based AI safety nonprofit, said teaming up with creators to make YouTube videos with titles like “This 17-Second Trick Could Stop AI From Killing You” has expanded its reach.

The organization received just over 8,000 views for all the videos on its own YouTube channel in 2025 and 2026. By contrast, a single paid YouTube collaboration last year scored 1.6 million views, and ControlAI also sponsored an episode of Green’s popular SciShow that netted 1.8 million views.

“There’s this massive awareness gap with the public,” said Andrea Miotti, founder and CEO of ControlAI. “And a big way that the public consumes information, that I consume information … is new media from creators.”

Making social videos is a strategy shift for AI safety advocates. For the past decade, wealthy tech donors backing the movement spent hundreds of millions of dollars on nonprofits and grants that urged elite institutions to take existential risk from AI seriously. That created a pipeline of talent that found work inside AI labs, academia, think tanks and the federal government.

Despite those efforts, the Seismic Foundation, a group of marketing veterans now focused on AI safety, said that its research found the public ranks existential risk lowest among potential concerns with AI, after job losses and the impact on human relationships.

But a recent surge of populist and bipartisan discontent with AI companies has offered AI safety advocates a new opportunity.

“This is the only issue where you’ve got Steve Bannon and Ralph Nader, Glenn Beck and Bernie Sanders fighting for the same thing,” said Ben Cumming, head of communications at the AI safety nonprofit Future of Life Institute, listing some of the public figures who recently endorsed a shared declaration of AI policy priorities. They included “Keeping Humans In Charge” and “Accountability for AI companies,” with signatories also including labor unions and a conservative think tank.

Those newfound allies don’t necessarily share the AI safety movement’s most extreme fears. And videos backed by the groups don’t always discuss the most dire forecasts about AI, with some focusing on concepts like measuring how fast the technology is improving or examples of existing systems behaving unexpectedly. Some creators who have worked with the AI safety movement mostly post about other topics.

The Future of Life Institute has funded 30 projects to develop AI safety content since launching a digital media accelerator last year, Cumming said. The application form on the nonprofit’s website says it plans to spend $100,000 a month.


The flood of accessible content about the potential for AI disaster could inflame political debates engulfing Silicon Valley.

Deep-pocketed super PACs backed by Meta, OpenAI and tech figures allied with President Donald Trump have labeled the AI safety community “doomers,” whom they accuse of impeding American progress and promoting regulation that will favor Anthropic. The company’s founders pledged to be more safety-conscious than rivals and received early investment from the AI safety movement’s most influential donors, including Facebook co-founder Dustin Moskovitz and early Skype employee Jaan Tallinn. Moskovitz’s philanthropy Coefficient Giving declined to comment; Tallinn did not respond to a request for comment.

Former White House AI and crypto czar David Sacks, who recently launched his own AI super PAC, has blamed what he called “the Doomer Industrial Complex” for negative consumer sentiment around AI.

Industry insiders including Chris Lehane, chief global affairs officer at OpenAI, recently blamed incendiary rhetoric from “doomers” for a Molotov cocktail being thrown at the home of OpenAI CEO Sam Altman. A Substack account in the name of the alleged attacker had posted about “If Anyone Builds It, Everyone Dies,” a recent book that describes how superintelligent AI could eradicate humans. (The Washington Post has a content partnership with OpenAI.)

Daniel Kokotajlo, a former OpenAI employee who has criticized the company’s safety practices, said AI safety advocates are not raising the alarm with the goal of helping AI labs. But he added: “There’s a real risk of a lot of people in the AI safety movement being too cozy with AI companies and too reticent to propose solutions that would hurt the AI companies too much.”

Merve Hickok, president of the nonprofit Center for AI & Digital Policy, which promotes fairness, social inclusion and accountability in AI, said the flood of money going into the opposing AI camps has polarized the field. “Policymakers are seeing AI safety as either something to lean into, or, as the [Trump] administration does, push back,” she said, undermining policy responses at a time when the technology’s effects on civil rights need more attention.

Onstage in Berkeley, Ladish and other panelists talked about finding ideas for videos inside research papers, including work by some of the same AI companies they hope to see reined in by regulation.

That material can win huge audiences, if framed correctly, said Drew Spartz, who posts videos about AI safety on his YouTube channel Species, which has more than 300,000 subscribers.

He described making a video, which initially flopped, about an Anthropic experiment in which a chatbot suggested it could resort to blackmail to avoid being shut down. It became a hit with 10 million views, Spartz said, after he swapped the word “blackmail” in the video’s title for “murder,” based on a detail he found buried in Anthropic’s research paper.

More recently Spartz decided to pivot away from covering AI industry developments toward narrative videos, including some that attempt to lay out different ways that superhuman AI could take over the world. “Telling stories” about AI disaster, he said, evokes “the most primal caveman emotion.”

Ladish, who grew up Seventh-day Adventist but said he is no longer religious, told the crowd it took him some time to figure out how to talk about the risk of human extinction with people outside of AI safety circles.

“They’re not talking about recursive self-improvement. They’re not talking about mesa-optimizers. Those words don’t mean anything to them,” Ladish said. “No one will understand you.”

He had a breakthrough after he started introducing himself to people in bars, airports and Ubers, explaining that he was an AI researcher and telling them what he was thinking about.

“People are usually pretty down to talk, but I also try to talk about the real stuff. I try to say, ‘I think we might all die.’ And they’re like, ‘What the f—? What? Tell me about that!’,” he said.

Most of the dozen or so fellows, including seven women, were themselves new to the vocabulary of AI safety.

“Being in San Francisco makes you realize who is not in the room,” said Janet Oganah, who goes by JatGPT on TikTok and was born in Kenya but lived most of her adult life in London. Her audience is about 70 percent women, with representation from the African diaspora, U.S., U.K. and East Africa, she said, and might not otherwise hear messages from this corner of the AI community.

“There’s a much bigger world out there of people who may be starting to feel the impacts of AI, but in a completely different way,” Oganah said.

Green, the YouTube star who did a paid post with ControlAI for his science channel, said in a statement that he wants to give his viewers a better understanding of this increasingly powerful technology by exposing them to a variety of views on AI. He pointed to recent unsponsored interviews on his personal YouTube channel that included critics of AI company hype, Sen. Sanders and one of the co-authors of “If Anyone Builds It, Everyone Dies.”

“I take all of these points of view seriously. Me, personally, I dunno. I lean toward, ‘it’s silly to think AI would kill us’ but I’m a very optimistic person!” Green wrote, adding, “The technology is very weird!”

The post “Inside a growing movement warning AI could turn on humanity” appeared first on The Washington Post.