“I prep for survival,” OpenAI’s Sam Altman confessed back in 2016. “I have guns, gold, potassium iodide, antibiotics, batteries, water, gas masks from the Israeli Defense Force and a big patch of land in Big Sur I can fly to.”

For more than a decade now, Altman and his fellow founders have lived in a state of controlled anxiety about artificial intelligence. This is, in fact, the origin story for three of the five major players in the A.I. arms race, each of which was formed out of panic that the other players weren’t taking existential fears about the technology seriously enough. They seem to have worried less about the risk of political backlash from actual humans, assuming that it wouldn’t materialize in time, would be quickly outmaneuvered by machine intelligence or could be bought off, perhaps, by talk of basic-income payments or thin promises of curing cancer.

But last month, when a Molotov cocktail was thrown at Altman’s San Francisco property, the human backlash landed literally on his doorstep. A few days later, Altman’s home endured another attack, this time by gunfire. It was hard not to think of the killing of the UnitedHealthcare chief executive Brian Thompson, which Luigi Mangione is charged with. The writer Jasmine Sun called this “A.I. populism’s warning shots.”

Americans still worry about the local impacts of data centers, storming to town halls en masse to protest them. They still worry about job loss and economic turmoil too, as do a growing number of politicians with their fingers lifted to the wind. But to many, the biggest A.I. labs now loom like the new faces of American oligarchy, as well — a fearsome concentration of economic and social power producing a self-compounding pattern of extreme inequality of the kind that has lacerated American life for decades. If the future lies with A.I., as we are so often told, it is unsettling to many and outrageous to some that so few people seem to stand in such absolute control of it.

In one sense, the vision peddled by A.I. companies is remarkably depersonalized: We hand more and more responsibility and judgment off to superintelligent black boxes, which rapidly begin shaping the course of the human future with decisions that remain illegible to the rest of us, including their designers. “People outside the field are often surprised and alarmed to learn that we do not understand how our own A.I. creations work,” Anthropic’s Dario Amodei wrote last year. “They are right to be concerned: This lack of understanding is essentially unprecedented in the history of technology.”

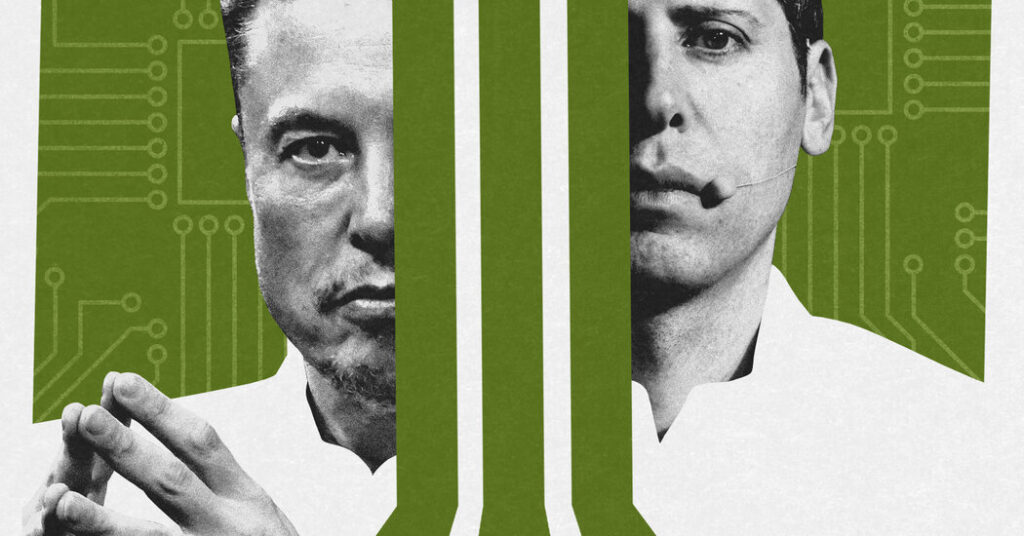

In another sense, and in the meantime, A.I. represents perhaps the most personalized sales pitch ever foisted on the passive American consumer — a vision of a near-total takeover of the country’s economic, social and cognitive lives by tools engineered by just five companies, run by five particular people, several of whom are widely described as sociopaths. The list is so short that you may know most of them by first name: Sam, Dario, Elon and Mark. (Demis Hassabis, who runs Google’s DeepMind, is perhaps less famous.)

These men are all already billionaires, or close to it, and on their current trajectories their wealth and influence look set to expand exponentially as, around them, anti-elitism multiplies, too. Perhaps this is one reason 50 percent of Americans told the Pew Research Center last year they were more concerned than excited about what’s to come from A.I. Only 10 percent said they were more excited. That is a yawning gap into which an entire society is being asked to tumble.

In 2026, A.I. discourse, like A.I. capability, jumps forward almost by the week. But perhaps the most memorable thing I’ve read about the shape of what’s to come remains an essay published back in 2017, by the science-fiction writer Ted Chiang, on BuzzFeed News. OpenAI had been founded just two years before; neither Elon Musk nor Amodei had yet split off on their own, and Mark Zuckerberg was almost a decade from his desperate A.I. spending spree. But already, apocalyptic evangelists like Musk were warning the National Governors Association that “A.I. is a fundamental risk to the existence of human civilization.” By that he meant the possibility that superpowered A.I. might decide that the purpose of existence was the manufacturing of paper clips or the harvesting of strawberries, making everything else, including humans, irrelevant.

“This scenario sounds absurd to most people, yet there are a surprising number of technologists who think it illustrates a real danger,” Chiang wrote. “When Silicon Valley tries to imagine superintelligence, what it comes up with is no-holds-barred capitalism.”

Today, the United States is in the middle of a notorious cost-of-living crisis fueled in large part by a housing shortage of perhaps 10 million units, and last year, the country spent more money building A.I. infrastructure than single-family homes. We built 10 times as many data centers as the next biggest builder (Germany). We invested more than 20 times as much money into A.I. as the world’s next biggest investor (China). Among other things, artificial intelligence is an enormously big bet for the American economy to have made.

And though it isn’t all that hard to imagine a story in which the investment pays off, it’s also not hard to see a kind of parallel with those familiar A.I. parables, in which a godlike superintelligence chooses to prioritize paper-clip manufacturing or strawberry picking over all other human endeavors.

Yet you hear considerably less about near-term existential risk these days, even if it remains a pre-eminent concern among some researchers. Not that long ago, half of the A.I. researchers surveyed said that there was at least a 10 percent risk that artificial intelligence would bring about human extinction. And you no longer hear much at all about the risk of A.I. helping to create biological weapons, though large language models are now routinely dispensing advice for designing superbugs, much to the alarm of epidemiologists.

We’ve passed through a panic about A.I. slop and generative disinformation, though social media is inarguably awash in them still, and balance-sheet debates about the A.I. bubble have subsided for now, too.

And while there is still widespread worry about mass unemployment, for now the data on job loss is pretty ambiguous, and these days, economists tend to strike more reassuring notes about the possibility of large-scale job disruption. Increasingly, that line is echoed by A.I. leaders themselves, who in recent weeks have executed a rhetorical about-face to downplay the risk of mass unemployment.

This may look like corporate P.R., an effort to tamp down populist backlash after years of hyping up investors. But because the outreach features the small set of familiar faces now reassuring us about the future of work and the future of war, not to mention the futures of medicine and companionship and coding, it’s hard to escape the impression that these same people are basically in charge of everything now. At a recent conference staged by the Palantir Foundation at Yale, Dean Ball, the policy wonk who was an architect of the Trump administration’s original A.I. policy, offered a chilling prophecy, describing A.I. as “this giant acid vat” which would dissolve the mediating institutions most Americans see as “society.” “It will not be A.I. in government,” Ball predicted. “It’s going to be A.I. as governments.” A survey of people in 30 countries last year found that Americans were among the most nervous about A.I., and that no other country’s citizens had less trust in their own government to regulate it.

This week, the White House signaled that it may make a sudden and dramatic U-turn on A.I. policy: once inclined toward hands-off support for industry growth, the administration is now floating a proposal to force federal review of all new proprietary models before release. And Americans are drawing lines in the sand where they can, too. In September 2025, Americans seemed roughly ambivalent about the construction of new data centers in their communities, according to Heatmap polling, with voters 2 points more likely to support new construction than to oppose it. Four months later, in February 2026, they were 24 points more likely to oppose it. That is a shockingly large swing in public opinion.

Northern Virginia is ground zero for the breakneck build-out of data centers, and between 2023 and 2025, voters there swung 69 points against building them in their own communities, from 45 points in favor to 24 points against. This is all the more remarkable given that in Loudoun County, the true center of activity, data centers are expected to generate nearly half of all local tax revenue in 2027: $1.3 billion of the $2.9 billion the county expects to bring in that year, County Supervisor Kristen Umstattd recently told Judge Glock of the magazine City Journal. “For years, pundits have lamented that America no longer builds things,” Glock observed. “Yet under our noses, one of the great building booms in American history has unfolded.” It is a speculative infrastructure expansion comparable to the interstate-highway push of the 1960s and 1970s, if not quite as heady and heedless as the railroad boom that defined 19th-century America and gave us the familiar robber barons who populate our cartoon memories of the first Gilded Age.

Perhaps it should not be surprising that according to a recent Quinnipiac poll, the only Americans with hopeful views of what the technology means for their day-to-day lives were those in the top income bracket, making over $200,000 per year.

For the last few years, A.I. has felt like a breakneck race. Or, perhaps, one race after another: races between the leading companies, between those companies and regulators and lobbyists, between knowledge workers and the machine agents that may replace them, between the American industry and the Chinese one. All, in their way, presume a certain kind of finish line, a point past which progress proceeds so rapidly that any advantage held by a model, a company or a country will only widen over time.

This end point has been called “artificial general intelligence” or “artificial superintelligence.” Many in the industry now talk about an interim phase of “recursive self-improvement,” in which A.I. starts to independently improve its own source code. Plenty of A.I. researchers believe it is right around the corner; Anthropic’s Jack Clark predicted this week that fully independent recursive self-improvement might be less than two years away. But less than two years ago, Bay Area venture capitalists were already giddily asking one another about whether they could “feel the A.G.I.”

And maybe we’re still on track for that. In the meantime, you’re more likely to hear pragmatic conversations about the thorny problem of what is called “diffusion”: the speed and shape of public uptake as new models spread out into the world beyond the lab, finding users and uses, hitting human bottlenecks and real-world obstacles and requiring new strategies or more narrowly trained models to navigate through or around them.

This is a pretty different vision, in which A.I. may continue to rapidly progress, even transform much of our lives, but without all the power necessarily accruing to the leading labs or the five individuals in charge of them. In this view, the state-of-the-art achievements of world-class models matter less than who is using A.I. and for what.

In April, to great fanfare, Anthropic refused to release a new model, Claude Mythos, which the company said could find and exploit security vulnerabilities in every tested piece of software, including those used in critical pieces of global I.T. infrastructure. It’s not exactly clear how far ahead of other models Mythos really was at these tasks, but it seems to have inspired the White House’s apparent about-face. Yet six months from now, there will inevitably be an open-source version of Mythos, perhaps not quite as good but much cheaper to produce, that many more users around the world will have access to and be able to customize to their own purposes. Maybe the state-of-the-art models will win out, in competitions like this, with the leading labs staying far enough ahead of ragtag upstarts to protect themselves. But if this is a race, it doesn’t have an obvious finish line, and it doesn’t really seem winner-take-all. The political scientist Jeffrey Ding calls it a “diffusion marathon.”

This is what A.I. people mean when they sometimes describe A.I. as a “general-purpose technology,” like steam engines, electricity or, more recently, computers and the internet. Some of the inventors and entrepreneurs who developed and refined those technologies made huge fortunes in their time, disrupting quite a lot of the world they inherited and giving us, ultimately, much of the world we ourselves live in. But none of them retained absolute control over those technologies for very long, let alone over the long-run future they unleashed. We still know the names of the robber barons, and we still live somewhat in their shadows. But we are not their serfs. Are we sure A.I. will be different?

The post A.I. Populism Is Here. And No One Is Ready. appeared first on New York Times.