Lawyers keep getting burned by artificial intelligence that invents cases and makes up quotes. Now, some attorneys are naming the software they used.

Last month, a Louisiana personal injury lawyer apologized after submitting briefs that cited a real court decision but quoted passages that didn’t exist. The mistakes appeared in two filings in the 19th Judicial District Court in Baton Rouge and were flagged by opposing counsel.

“I’m trying to understand how I made this mistake,” Ross LeBlanc, a partner at Dudley DeBosier, wrote in a private letter to Judge William Jorden on March 27. Earlier this year, he said, he began using an artificial intelligence program called Eve to draft pleadings. At first, he checked the citations often. “They were always correct when I checked them,” he wrote.

That consistency gave him confidence, and eventually, he stopped checking, he said.

“I never thought this could happen to me,” LeBlanc wrote, adding that he could not be sure whether the mistake came from Eve’s software or from his own hasty copying and pasting.

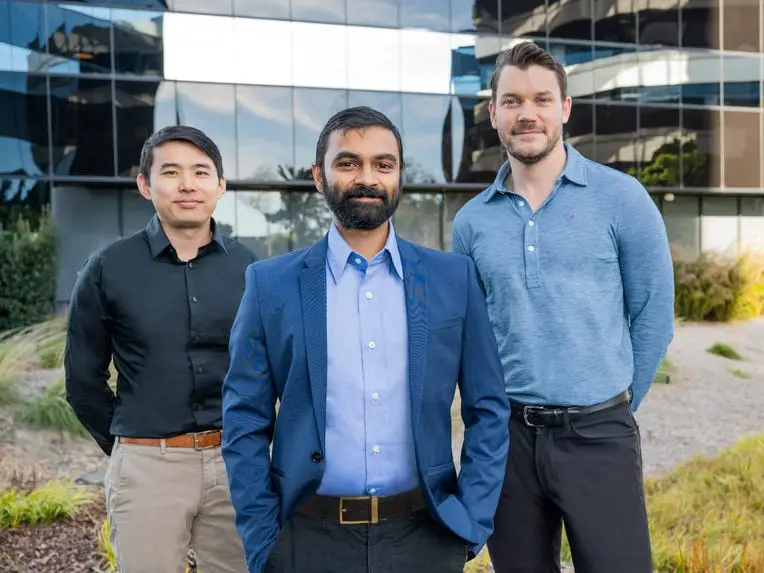

Jay Madheswaran, Eve’s chief executive, told Business Insider on Thursday that after a close audit of the case with Dudley DeBosier, the company confirmed Eve “did not hallucinate any case citations in this matter,” including any fabricated quotations.

Courts have slapped sanctions on attorneys for filing briefs with errors created by artificial intelligence — often called “hallucinations.” Last week, Sullivan & Cromwell, one of the country’s oldest and most elite law firms, apologized to a federal judge over a similar slip-up.

What’s new here is the blame game. When an attorney names the tools involved, the companies behind the software are put in the spotlight and could face reputational repercussions.

Legal software companies like Harvey, Legora, and Eve have raised billions of dollars on the promise that they can make lawyers faster — and offer firms a level of reliability that general-purpose tools can’t match. If their software starts to embarrass customers in court, that trust erodes.

Damien Charlotin, a French researcher who tracks hallucinations in court filings, estimates that fewer than 10% of cases identify the software used. Many lawyers, he suspects, keep that part private because they’re relying on free chatbots like ChatGPT or other off-the-shelf tools that may not be authorized for client work.

Last year, a Latham & Watkins lawyer defending Anthropic in a copyright lawsuit made headlines after citing an article that does not exist. The lawyer said the mistake stemmed from using Anthropic’s own chatbot, Claude, which fabricated an article title and authors.

Eve builds software for plaintiff-side lawyers using large language models, helping them draft documents, map out medical histories, and send and respond to discovery requests. The company was valued at $1 billion after it raised a $103 million funding round about a year ago. Madheswaran said Eve now processes more than 200,000 documents and other results a month, up around 100-fold from a year ago.

LeBlanc told the judge that he had been wary of the technology generally because of the “horror stories” about hallucinated case law. He said he was persuaded after Eve pitched the tool to his firm and assured attorneys it had safeguards to reduce errors. He believed the risk was limited as long as he conducted his own legal research and directed the software to rely only on approved sources.

Then, opposing counsel in the personal injury case pointed out his mistakes.

LeBlanc’s apology surfaced this month in a separate case involving a trip-and-fall at a Lowe’s store. The opposing counsel found hallucinations in a brief filed by Dudley DeBosier and included LeBlanc’s letter in a request urging the court to expand its inquiry into possible sanctions.

Dudley DeBosier has filed a motion to strike opposing counsel’s request, arguing that the cases are unrelated. The firm also indicated that a lawyer used Claude to help draft the brief in the Lowe’s case.

Software companies and law firms widely share the view that artificial intelligence can assist in research and drafting, but that responsibility for the final product remains with the human who signs the filing.

Madheswaran said Eve makes that explicit in its contracts and onboarding with new customers. The software also includes features designed to catch errors before they reach a courtroom, though they don’t always work. Some errors are harder to spot than others, he said. Confirming a case exists is easier than verifying a quote is exact.

As the legal profession races to adopt artificial intelligence, mistakes are more likely to be caught. Courts are getting wiser to the technology, and opposing counsel are adjusting their tactics. Instead of only attacking legal arguments, lawyers are scanning filings for errors that could undermine the other side’s credibility.

Chad Dudley, a founding partner of Dudley DeBosier, a firm with about 40 attorneys, said it trains its lawyers to carefully review generated results and requires them to agree to use the technology responsibly.

For his part, LeBlanc said he hopes other lawyers learn from his mistake. He told Business Insider on Thursday that Eve helped him move faster under time pressure, but after the errors surfaced, he felt “sick to my stomach” and couldn’t sleep.

“I’m responsible for checking everything, no matter what technology comes along,” he said.

He doesn’t blame Eve for the blunder. Still, he’s setting the tool down for now.

“I feel like, given what happened,” he said, “it’s fair to have a cooling off period, you know, touch grass.”

The post The blame game over AI hallucinations in court filings has started appeared first on Business Insider.