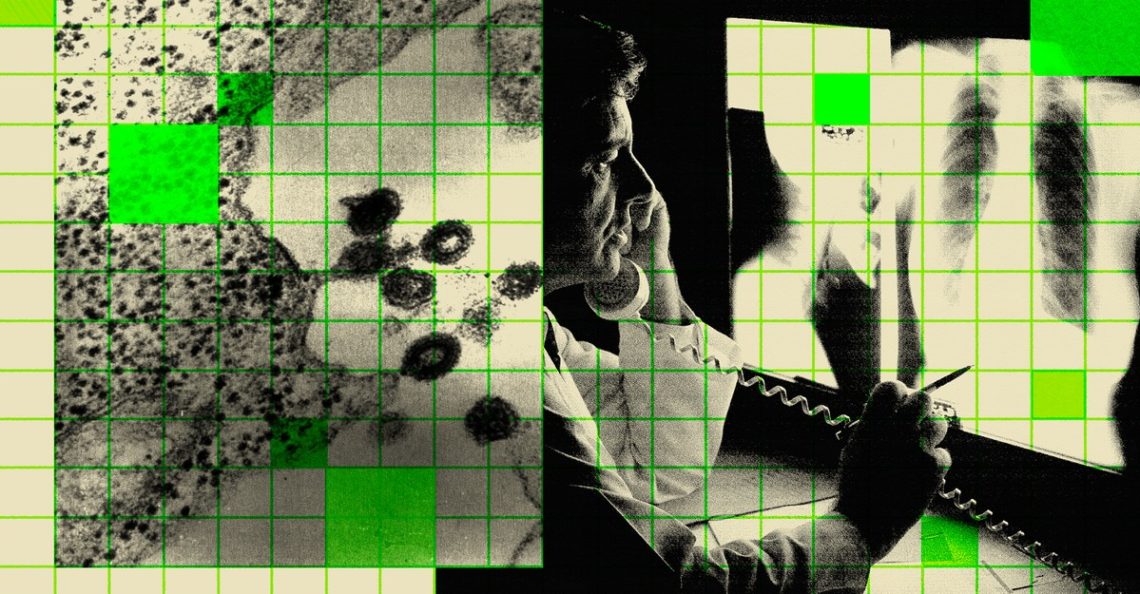

To hear Silicon Valley tell it, the end of disease is well on its way. Not because of oncology research or some solution to America’s ongoing doctor shortage, but because of (what else?) advances in generative AI.

Demis Hassabis, a Nobel laureate for his AI research and the CEO of Google DeepMind, said on Sunday that he hopes that AI will be able to solve important scientific problems and help “cure all disease” within five to 10 years. Earlier this month, OpenAI released new models and touted their ability to “generate and critically evaluate novel hypotheses” in biology, among other disciplines. (Previously, OpenAI CEO Sam Altman had told President Donald Trump, “We will see diseases get cured at an unprecedented rate” thanks to AI.) Dario Amodei, a co-founder of Anthropic, wrote last fall that he expects AI to bring about the “elimination of most cancer.”

These are all executives marketing their products, obviously, but is there even a kernel of possibility in these predictions? If generative AI could contribute in the slightest to such discoveries—as has been promised since the start of the AI boom—where would the technology and scientists using it even begin?

I’ve spent recent weeks speaking with scientists and executives at universities, major companies, and research institutions—including Pfizer, Moderna, and the Memorial Sloan Kettering Cancer Center—in an attempt to understand what the technology can (and cannot) do to advance their work. There’s certainly a lot of hyperbole coming from the AI companies: Even if, tomorrow, an OpenAI or Google model proposed a drug that appeared credibly able to cure a single type of cancer, the medicine would require years of laboratory and human trials to prove its safety and efficacy in a real-world environment, which AI programs are nowhere near able to simulate. “There are traffic signs” for drug development, “and they are there for a good reason,” Alex Zhavoronkov, the CEO of Insilico Medicine, a biotech company pioneering AI-driven drug design, told me.

Yet Insilico has also used AI to help design multiple drugs that have successfully cleared early trials. The AI models that made Hassabis a Nobel laureate, known as AlphaFold, are widely used by pharmaceutical and biomedical researchers. Generative AI, I’ve learned, has much to contribute to science, but its applications are unlikely to be as wide-ranging as its creators like to suggest—more akin to a faster engine than a self-driving car.

There are broadly two sorts of generative AI that are currently contributing to scientific and mathematical discovery. The first are essentially chatbots: tools that search, analyze, and synthesize scientific literature to produce useful reports. The dream is to eventually be able to ask such a program, in plain language, about a rare disease or unproven theorem and receive transformative insights. We’re not there, and may never be. But even the bots that exist today, such as OpenAI’s and Google’s separate “Deep Research” products, have their uses. “Scientists use the tools that are out there for information processing and summarization,” Rafael Gómez-Bombarelli, a chemist at MIT who applies AI to material design, told me. Instead of Googling for and reading 10 papers, you can ask Deep Research. “Everybody does that; that’s an established win,” he said.

Good scientists know to check the AI’s work. Andrea Califano, a computational biologist at Columbia who studies cancer, told me he sought assistance from ChatGPT and DeepSeek while working on a recent manuscript, which is now a normal practice for him. But this time, “they came up with an amazing list with references, people, authors on the paper, publications, et cetera—and not one of them existed,” Califano said. OpenAI has found that its most advanced models, o3 and o4-mini, are actually two to three times more likely to confidently assert falsehoods, or “hallucinate,” than their predecessor, o1. (This was expected for o4-mini, because it was trained on less data, but OpenAI wrote in a technical report that “more research is needed to understand” why o3 hallucinates at such a high rate.) Even when AI research agents work perfectly, their strength is summary, not novelty. “What I don’t think has worked” for these bots, Gómez-Bombarelli said, “is true, new reasoning for ideas.” These programs, in some sense, can fail doubly: Trained to synthesize existing data and ideas, they invent; asked to invent, they struggle. (The Atlantic has a corporate partnership with OpenAI.)

To help temper—and harness—the tendency to hallucinate, newer AI systems are being positioned as collaborative tools that can help judge ideas. One such system, announced by Google researchers in February, is called the “AI co-scientist”: a series of AI language models fine-tuned to research a problem, offer hypotheses, and evaluate them in a way somewhat analogous to how a team of human scientists would, Vivek Natarajan, an AI researcher at Google and a lead author on the paper presenting the AI co-scientist, told me. Similar to how chess-playing AI programs improved by playing against themselves, Natarajan said, the co-scientist comes up with hypotheses and then uses a “tournament of ideas” to rank which are of the highest quality. His hope is to give human scientists “superpowers,” or at least a tool to more rapidly ideate and experiment.

The usefulness of those rankings could require months or years to verify, and the AI co-scientist, which is still being evaluated by human scientists, is for now limited to biomedical research. But some of its outputs have already shown promise. Tiago Costa, an infectious-disease researcher at Imperial College London, told me about a recent test he ran with the AI co-scientist. Costa and his team had made a breakthrough on an unsolved question about bacterial evolution, and they had not yet published the findings—so it could not be in the AI co-scientist’s training data. He wondered whether Google’s system could arrive at the breakthrough itself. Costa and his collaborators provided the AI co-scientist with a brief summary of the issue, some relevant citations, and the central question they had sought to answer. After running for two days, the system returned five relevant and testable hypotheses—and the top-ranked one matched the human team’s key experimental results. The AI appeared to have proposed the same genuine discovery that they had made.

The system developed its top hypothesis with a simple rationale, drawing a link to another research area and reaching a conclusion that had taken the human team years to arrive at. The humans had been “biased” by long-held assumptions about this particular phenomenon, José Penadés, a microbiologist at ICL who co-led the research with Costa, told me. But the AI co-scientist, without such tunnel vision, had found the idea by drawing straightforward research connections. If they’d had this tool and hypothesis five years ago, he said, the research would have proceeded significantly faster. “It’s quite frustrating for me to realize it was a very simple answer,” Penadés said. The system did not concoct a new paradigm or unheard-of notion; it just efficiently considered a large amount of information, which turned out to be good enough. With human scientists having already produced, and continuing to produce, tremendous amounts of knowledge, perhaps the most useful AI will not automate the work of discovery so much as complement it.

The second type of scientific AI aims, in a sense, to speak the language of biology. AlphaFold and similar programs are trained not on internet text but on experimental data, such as the three-dimensional structure of proteins and gene expression. These types of models quickly apply patterns drawn from more data than even a large team of human researchers could analyze in a lifetime. More traditional machine-learning algorithms have, of course, been used in this way for a long time, but generative AI could supercharge these tools, allowing scientists to find ways to repurpose an older drug for a different disease, or identify promising new receptors in the body to target with a therapy, to name two examples. These tools could substantially increase both “time efficiency and probability of success,” Sriram Krishnaswami, the head of scientific affairs at Pfizer Oncology, told me. For instance, Pfizer has used an internal AI tool to identify two such targets that might help treat breast and prostate cancer, which are currently being tested.

Similarly, generative-AI tools can contribute to drug design by helping scientists more efficiently balance various molecular traits, side effects, or other factors before moving to the lab or to trials. The number of configurations and interactions for any possible drug is profoundly large: There are 10^632 sequences of mRNA that could produce the spike protein used in COVID vaccines, Wade Davis, Moderna’s head of digital for business, a role that includes the AI-product team, told me. That’s hundreds of orders of magnitude beyond the roughly 10^80 atoms in the observable universe. Generative AI could help substantially reduce the number of sequences worth exploring.

“Possibly there will never be a drug which is ‘discovered’ through AI,” Pratyush Tiwary, a chemical physicist at the University of Maryland who uses AI methods, told me. “There are good companies that are working on it, but what AI will do is to help reduce the search space,” that is, the number of possibilities scientists need to investigate on their own. These AI models are to biologists what a graphing calculator and drafting software are to an engineer: You can ideate faster, but you still have to build a bridge and confirm that it won’t crumble before driving across it.

The ultimate achievement of AI, then, may simply be to drastically improve scientific efficiency, not unlike the chatbots already used in any number of ordinary office jobs. When considering “the whole drug-development life cycle, how do we compress time?” Anaeze Offodile II, the chief strategy officer at MSK, told me. AI technologies could shave years off that life cycle, though many years would still remain. Offodile imagined a reduction “from 20 years to maybe 15 years,” and Zhavoronkov, of Insilico, said that AI could “help you cut maybe three years” off the total process and increase the probability of success.

There are, of course, substantial limitations to these biological models’ capabilities. For instance, though generative AI has been very successful in determining protein structure, similar programs frequently suggest small-molecule structures that cannot actually be synthesized, Gómez-Bombarelli said. Perhaps the biggest bottleneck to using generative AI to revolutionize the life sciences is a scarcity of high-quality training data gathered from relevant biological experiments. Such a revolution would require useful predictions not just about the relatively constrained domain of how a protein will fold or bind to a specific receptor, but also about the complex cascade of signals within and between cells across the body. “The most important thing is not to design the best algorithm,” Califano said. “The most important thing is to ask the right question.” The machines need knowledge to begin with that they cannot, at least for the foreseeable future, generate by themselves.

But perhaps they can with human collaborators. Gómez-Bombarelli is the chief science officer of materials at Lila Sciences, a start-up that has built a lab with equipment that can be directed by a combination of human scientists and generative AI, allowing models to test and refine hypotheses in a loop. Insilico has a similar robotic lab in China, and Califano is part of a global effort led by the Chan Zuckerberg Initiative to build an AI “virtual cell” that can simulate any number of human biological processes. Generating “novel” ideas is not really the main issue. “Hypotheses are cheap,” Gómez-Bombarelli said. But “evaluating hypotheses costs millions of dollars.”

Throwing data into a box and shaking it has yielded incredible results in processing human language, but that won’t be enough to treat disease. Humans designing science-boosting AI models have to understand the problem, ask appropriate questions, and curate relevant data, then experimentally verify or refute the resulting system’s outputs. The way to build AI for science, in other words, is to do some science.

The post How AI Will Actually Contribute to a Cancer Cure appeared first on The Atlantic.