Memory chips have become the most valuable commodity in the AI economy.

The Philadelphia Stock Exchange Semiconductor Index has leaped 60% in six weeks, and Micron, a memory chipmaker, surged 38% last week alone, its best week since 2008. Retail traders have caught on: their buying into the rally that same week hit its highest level in a year, per JPMorgan.

But Willy Shih is not so sure. The Harvard Business School professor, who has tracked semiconductor cycles since the 1980s, told Fortune the AI memory boom looks like every other memory cycle he has watched—just bigger.

“Anytime people show me these curves that just go to the sky with no end, that never continues forever,” he said. “This too will pass.”

What memory is, and why it’s suddenly worth so much

Every “computer,” whether it is your laptop, phone, Switch 2, or an AI server, needs memory. Memory is the part of a computing system that holds data and instructions while a program is running; without it, processors have nothing to work on.

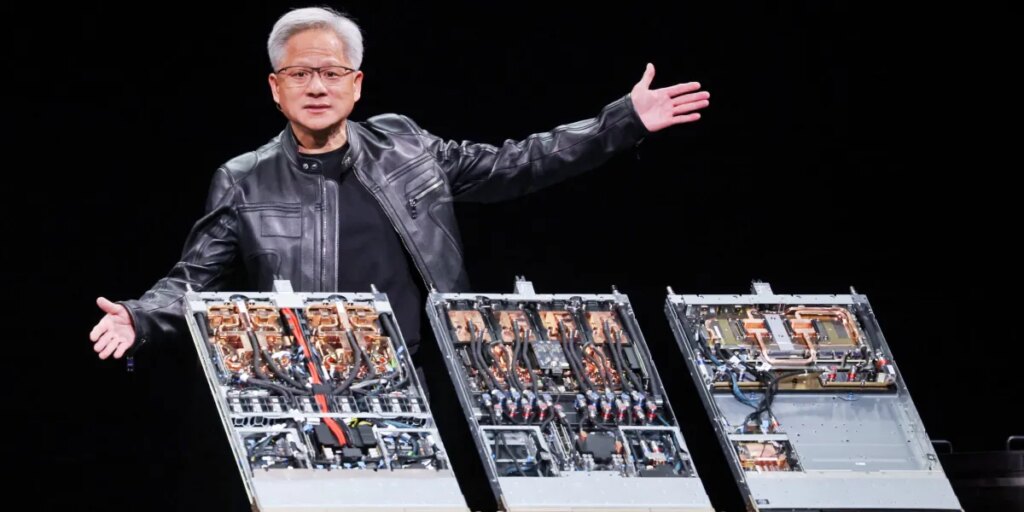

Memory comes in a few different flavors. DRAM, or dynamic random access memory, is the standard chip used in consumer devices like phones. But recently, companies have been demanding high-bandwidth memory, or HBM, the chips used inside AI accelerators like Nvidia’s GPUs. They’re needed because AI workloads are memory-hungry in a way ordinary computing is not: a single AI server requires roughly eight to 10 times the DRAM of a traditional server, and far more high-bandwidth memory than any consumer device.

For decades, memory was the cheapest thing about a computer to upgrade. It got better and cheaper every year, on a trend that looks something like Moore’s Law: Gordon Moore’s observation, first made in 1965 and revised in 1975, that the number of transistors on a chip would roughly double every two years. Memory followed its own version of that curve: more capacity for the same money, year after year, even with boom-bust cycles.

“Historically, it has always been you get more memory for the same money,” said Shih, who teaches supply chain strategy at Harvard Business School. “We have never seen price increases like we have over the last six months.”

DRAM contract prices, per TrendForce, are projected to rise 58%-63% quarter over quarter in Q2, the steepest jump in a decade. Samsung, the largest memory maker in the world, has said its pricing rose 90% in the first quarter alone.

How AI broke the supply chain

Shih likened the dynamic to the current scramble for limited oil supplies as the Strait of Hormuz crisis grows.

Memory makers operate “fabs,” short for fabrication facilities, that produce a finite number of silicon wafers each year. Those wafers can be allocated to DRAM or HBM, but the total is fixed. HBM is the most profitable, and the three big players have reallocated capacity toward it at the expense of everything else. Servers now account for 60%-70% of memory demand, up from around 30% before the AI boom, according to analysts at Jefferies.
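As a rough illustration of why a fixed wafer budget makes this a zero-sum trade, the Python sketch below splits a hypothetical annual wafer count between HBM and conventional DRAM. The wafer total and both share figures are invented for the example; they are not numbers from Shih, Jefferies, or any chipmaker.

```python
def allocate_wafers(total_wafers: int, hbm_share: float) -> dict:
    """Split a fixed wafer budget between HBM and conventional DRAM."""
    if not 0.0 <= hbm_share <= 1.0:
        raise ValueError("hbm_share must be between 0 and 1")
    hbm = round(total_wafers * hbm_share)
    # Whatever does not go to HBM is all that is left for DRAM.
    return {"hbm": hbm, "dram": total_wafers - hbm}


TOTAL_WAFERS = 1_000_000  # hypothetical annual output, for illustration only

before = allocate_wafers(TOTAL_WAFERS, hbm_share=0.10)  # assumed pre-boom split
after = allocate_wafers(TOTAL_WAFERS, hbm_share=0.30)   # assumed post-boom split

# Every wafer moved to HBM is a wafer no longer available for DRAM.
dram_lost = before["dram"] - after["dram"]
print(f"DRAM wafers given up to HBM: {dram_lost:,}")
```

Because the total never changes, any gain on the HBM side shows up as an equal loss on the DRAM side, which is the squeeze consumer-device makers are feeling.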

The squeeze means makers of consumer products that use memory, like iPhones or computers, will have to raise prices, just like Nintendo did on the Switch 2.

Adding to the competition for limited supplies is the world’s biggest chipmaker by market cap.

Nvidia announced last October that it will use LPDDR5—a low-power version of consumer DRAM—for its inference GPUs by the end of 2026, because the chip is more power-efficient than the server memory it currently relies on. The shift means Nvidia is now bidding for the same memory pool as Apple, Samsung, and every Android maker.

“It has already almost doubled the price for memory that goes into servers,” Shih said.

A Jefferies note from late March by tech analyst Edison Lee forecasts a 31% year-over-year decline in global smartphone shipments over the next 12 months, a contraction without precedent outside the pandemic. Already, prices of entry-level 5G phones in India have risen about 30% since October. Sony raised PlayStation 5 prices by up to $150 in March.

Apple, by far the most popular smartphone maker in the world, hasn’t escaped either. CEO Tim Cook warned analysts last quarter that gross margins will continue to decline due to memory pricing.

The crash

The next thing that will happen, Shih said, is what always happens at the end of a memory cycle. For the last 60 years, companies have raced to add capacity when prices were high, all of that capacity has then arrived at the same time, and prices have crashed.

Despite Wall Street’s insistence that this time is different, Shih said: “Same cycle, except bigger amplitude.”

The question is when the crash comes. New fabs from Samsung, SK Hynix, Micron, and Kioxia, the top memory companies, are not expected to reach volume production until late 2027 or 2028.

Shih recalled a conversation with a memory maker about three years ago, when the industry was unprofitable and the executive was waiting for the right part of the cycle to break ground on a new fab.

“Actually, the right time to have started building that fab would have been when we talked about it three years ago,” Shih said. The industry, in other words, is always late.
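The boom-bust pattern Shih describes can be sketched with a toy simulation in Python: firms order new capacity only while prices are high, and each order takes several quarters to come online. Everything here is an assumption made for illustration (the price index, the 1.1 ordering threshold, the 15% order size, the eight-quarter build lag, and the 5% demand growth); it is not Shih's model or an industry forecast.

```python
def simulate_cycle(quarters: int = 24, lag: int = 8,
                   demand_growth: float = 0.05) -> list:
    """Toy memory cycle: capacity ordered today arrives `lag` quarters later."""
    demand = 100.0
    capacity = 100.0
    pipeline = [0.0] * lag  # capacity under construction, oldest order first
    prices = []
    for _ in range(quarters):
        capacity += pipeline.pop(0)   # the oldest order comes online
        price = demand / capacity     # toy price index; 1.0 means balanced
        prices.append(price)
        # Firms break ground only when prices are already high.
        order = 0.15 * capacity if price > 1.1 else 0.0
        pipeline.append(order)
        demand *= 1 + demand_growth
    return prices


prices = simulate_cycle()
peak_quarter = prices.index(max(prices))
print(f"Peak price index {max(prices):.2f} in quarter {peak_quarter}")
print(f"Final price index {prices[-1]:.2f}")
```

In this sketch, prices keep climbing for the whole build lag, then fall as quarter after quarter of delayed orders arrives together, eventually pushing the index below its balanced level even though demand never stops growing.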

The post Wall Street thinks memory is AI’s golden ticket. Harvard’s chip expert warns: ‘Curves that just go to the sky with no end…never continue forever’ appeared first on Fortune.