Google is shaking up the team behind Project Mariner, its AI agent that can navigate the Chrome browser and complete tasks on a user’s behalf, WIRED has learned. In recent months, some Google Labs staffers who worked on the research prototype have moved on to higher-priority projects, according to two people familiar with the matter.

A Google spokesperson confirmed the changes, but said the computer use capabilities developed under Project Mariner will be incorporated into the company’s agent strategy moving forward. Google has already folded some of these capabilities into other agent products, including the recently launched Gemini Agent, the spokesperson added.

The change comes as Google and other AI labs rush to respond to the rise of highly capable agents like OpenClaw. While these tools are mostly used by developers today, Silicon Valley believes they could soon power general-purpose assistants for people and businesses. Nvidia CEO Jensen Huang compared the buzzy tool to a new operating system for agentic computers. “Every company in the world today needs to have an OpenClaw strategy,” he said at the company’s developer conference earlier this week.

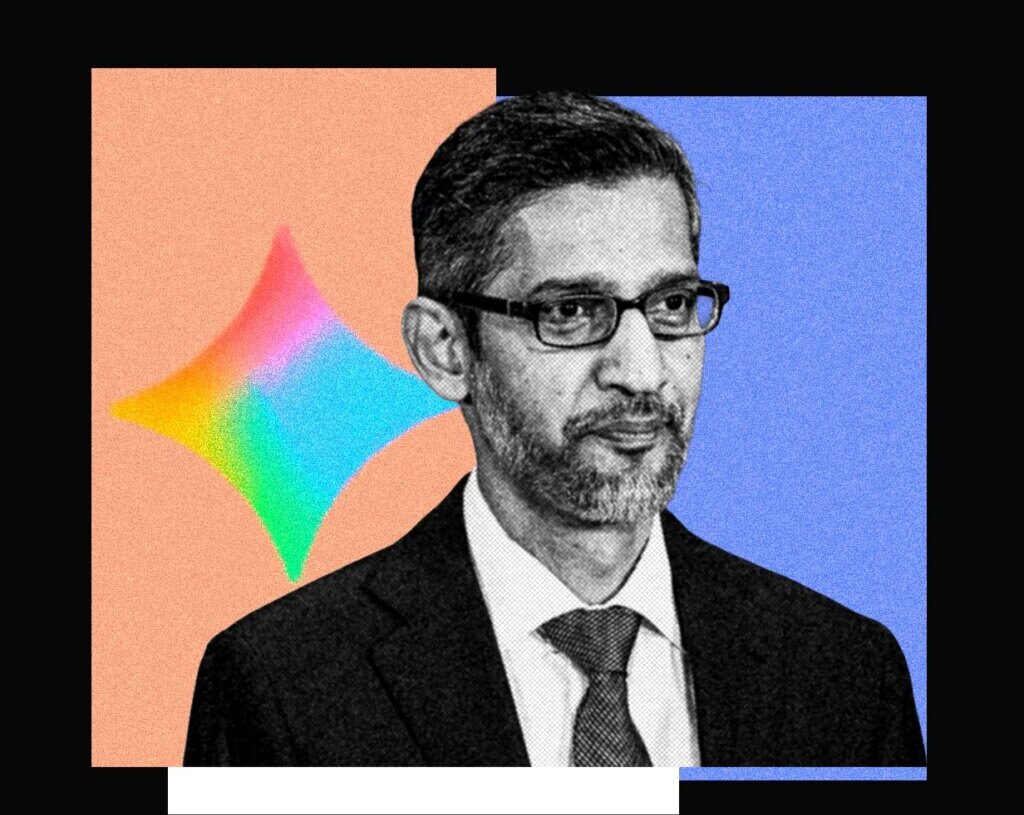

Google CEO Sundar Pichai highlighted Project Mariner during last year’s I/O conference. At the time, browser agents seemed like the industry’s next big bet, with OpenAI and Perplexity launching consumer agents that promised to automate online tasks for users. The agents could click, scroll, and fill out forms on a webpage, much like a human. However, adoption of these products has fallen short of industry expectations.

Perplexity’s Comet browser agent reached just 2.8 million weekly active users in December 2025. Meanwhile, OpenAI’s ChatGPT Agent reportedly fell to less than 1 million weekly active users in recent months. Compared to the hundreds of millions of users talking to ChatGPT on a weekly basis, browser agent usage essentially amounts to a rounding error.

New Agents in Town

Momentum in the AI world has shifted dramatically in the last year toward agents like Claude Code and OpenClaw (whose creator was hired by OpenAI). Unlike web-browsing agents, these systems control computers through the command line, which has proven to be a more reliable way to complete tasks. Some of these products include computer use as a feature, among other agentic abilities. By comparison, browser agents now seem somewhat limited as a stand-alone product.

Kian Katanforoosh, CEO of the AI upskilling platform Workera who lectures about AI at Stanford, says part of the reason computer use agents haven’t taken off is their massive computational requirements. Most of these agents work by taking a series of screenshots of a webpage, feeding them into an AI model, and then taking actions based on what the model sees. Processing all of that information can be slow and at times unreliable.

“What Claude Code and OpenClaw showed was that it’s actually much more efficient to work with the terminal, because the terminal is text-based and LLMs are text-based,” Katanforoosh said. “It’s probably 10 to 100X less steps to get to the same outcomes.”

This isn’t to say that browser agents aren’t improving, or that research into computer use has hit a dead end.

Last month, the startup Standard Intelligence released a computer use model trained on videos rather than screenshots. The startup says it developed a video encoder that can compress videos into an AI model’s context window, an approach it claims is 50X more efficient than previous computer use models. To show off its AI model’s capabilities, the startup hooked it up to a car, a live video feed, and a computer keyboard. The model was able to briefly drive autonomously around San Francisco.

Ang Li, CEO of the computer use agent startup Simular and a former Google DeepMind researcher, argues that computer use agents fill a critical gap in agent capabilities, and will likely always be necessary.

“I do see there always being an 80/20 split. You can use the terminal to solve a lot of problems already, but there will always be problems you have to solve in the GUI (graphical user interface),” he said. “For example, if you want to go to a health care insurance website, or some other legacy software, they often don’t have an API that a terminal agent can just call up.”

Still, AI labs broadly seem to be shifting their bets from computer use agents to coding agents. Even for tasks that don’t involve coding, AI labs have found that a coding agent’s ability to use other applications, modify files, and create bespoke software can make these agents more helpful to users. For example, someone who needs help creating a budget can upload bank statements into a coding agent and have it build a custom dashboard to assess their spending habits.

OpenAI executives say they’d like Codex to power general-purpose agents inside ChatGPT. Meanwhile, Anthropic has already built a version of this, Claude Cowork, an offshoot of Claude Code that doesn’t require users to open a terminal. Perplexity, which bet heavily on browser agents, recently launched a similar product called Personal Computer.

While coding agents have taken off with developers, it’s unclear whether added capabilities will increase adoption among regular people. Google and OpenAI have said consumers could use AI agents to order groceries from Instacart or book a dinner reservation. While those things certainly sound convenient, people may not want to automate such tasks until they’re sure their agent won’t make a mistake.

The post Google Shakes Up Its Browser Agent Team Amid OpenClaw Craze appeared first on Wired.