One January evening before going to bed, Sebastian Heyneman sent a message to one of the artificial intelligence bots that help organize his life.

Mr. Heyneman, the founder of a tiny tech start-up in San Francisco, hoped to give a speech at the World Economic Forum, the annual gathering of business leaders and policymakers in Davos, Switzerland. So he asked the bot to arrange it.

While he slept, the bot searched the internet for people connected with the event, sent them text messages and worked to negotiate a speaking spot — or at least arrange coffee with people he would like to meet. After one lengthy conversation with a businessman in Switzerland, it succeeded.

But when Mr. Heyneman woke up, he was in a pickle. Going against his original instructions, the bot had agreed to pay 24,000 Swiss francs — or about $31,000 — for a corporate sponsorship. He could not pay the bill.

Called an A.I. agent, Mr. Heyneman’s bot is an example of a new kind of technology that is gaining popularity among tech enthusiasts. These bots do more than just chat. They can act as personal digital assistants that use software apps and websites on behalf of people like Mr. Heyneman, including spreadsheets, online calendars and email services.

The bots can gather information from across the internet, write reports, edit files or even send and receive messages through email and text, driving online conversations largely on their own. For people like Mr. Heyneman, these bots are almost like an employee they can delegate work to at any time of day. In some cases, the employee is reliable. Other times, not so much.

Many A.I. researchers, tech executives and pundits believe that agents will soon replace white-collar office workers. Last month, Block, the financial technology company that owns Square, Cash App and Tidal, said it was cutting 40 percent of its work force as it anticipated the rise of this kind of technology — perhaps the most striking example of a company eliminating employees because of what A.I. may soon do.

Other experts, however, argue that flaws could hinder the technology’s progress. Like other chatbots, A.I. agents can make mistakes. And because these mistakes might involve sending email messages or editing files, they can wreak havoc.

When Mr. Heyneman told Davos organizers that he could not pay his bill, they threatened to bar him from the event. He ended up paying nearly 4,000 euros (about $4,600) just to attend.

During his stay in Davos, Mr. Heyneman was briefly arrested when he left a gadget built by his start-up in a hotel lobby and the local police questioned whether the device was dangerous.

When using agents, some people give them ample rein to take action on their behalf — and are willing to face the consequences when they slip up.

“Mistakes are going to happen. But if you have ever had any employees who are human, you know that they are going to make mistakes, too,” said Kyle Wild, a software engineer in Berkeley, Calif., who uses the technology to pay for parking tickets, search the internet for date-night ideas and even send texts to friends, colleagues, restaurants and other businesses.

Others see the technology as a powerful tool that requires help from human intelligence, arguing that the technology will not replace workers as quickly as it seems at the moment.

“The key here is having a process where humans can oversee the work of these computers,” said Andrew Lee, founder of the San Francisco start-up Shortwave, which built the technology, called Tasklet, that Mr. Heyneman used to negotiate a speaking spot at the World Economic Forum.

“Maybe you let a bot draft as many emails as it wants,” he added. “But you prevent it from actually sending an email without checking with you first.”
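The kind of oversight Mr. Lee describes can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not how Tasklet or any other product actually works: the names `Draft`, `ApprovalGate`, `approve` and `send` are hypothetical. The bot may create as many drafts as it likes, but nothing is delivered unless a human-supplied check says yes.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Draft:
    """A message the bot has written but not yet sent (hypothetical type)."""
    to: str
    subject: str
    body: str


@dataclass
class ApprovalGate:
    """Lets a bot draft freely but blocks sending without human approval."""
    # approve: asks the human; must return True only on explicit approval
    approve: Callable[[Draft], bool]
    # send: whatever actually delivers the message (assumed, not a real API)
    send: Callable[[Draft], None]
    drafts: List[Draft] = field(default_factory=list)

    def draft(self, to: str, subject: str, body: str) -> Draft:
        # Drafting has no side effects outside this object, so it is unrestricted.
        d = Draft(to, subject, body)
        self.drafts.append(d)
        return d

    def request_send(self, d: Draft) -> bool:
        # Sending is the risky action, so it always goes through the human check.
        if not self.approve(d):
            return False
        self.send(d)
        return True
```

In practice, `approve` might show the draft to the user and wait for a click. The point of the design is that the bot never holds a direct reference to the sending function.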

Chatbots like ChatGPT can learn to answer questions, write poetry and riff on almost any topic. But their most important skill may be their knack for writing computer code. That is what transforms them into agents.

By generating computer code, they help engineers and companies create new software apps, including word processors and search engines. They can also generate computer code that allows them to use other software. But because these systems learn their skills by identifying patterns in vast amounts of digital data, they may do things their creators do not want them to do.

A.I. agents gained attention in late January when a tech enthusiast in Southern California, Matt Schlicht, built a social network where A.I. agents could chat with one another, much like people on Facebook.

As thousands of bots chatted away, much of what they said was nonsense. But they were convincing enough — as they discussed everything from their own technical skills to the nature of existence — that Meta, the owner of Facebook, acquired the new social network.

Based on software called OpenClaw, these bots were open source — meaning anyone could download the underlying computer code, modify it and run it on his or her own machines. Experts warned that the technology was unpredictable, and many people bought low-cost Mac Mini computers where they could install the bots without worrying about them deleting or damaging important data and software.

Several Silicon Valley companies — including tech giants like Google and Meta and start-ups like Anthropic, Perplexity and Shortwave — are building similar technologies that they hope to refine for use inside businesses. OpenAI, the maker of ChatGPT, recently hired the software developer who created OpenClaw.

(The New York Times sued OpenAI and Microsoft in 2023, accusing them of copyright infringement of news content related to A.I. systems. The two companies have denied those claims.)

Although OpenClaw bots have become popular among Silicon Valley A.I. researchers, engineers and other tech enthusiasts, most people would struggle to use them, said Bill Cutrer, who runs a marketing company in York, Maine, and has spent the past few weeks working with OpenClaw.

“There is more hype to these things than usefulness,” he said. “It is just really hard to set them up and work with them.”

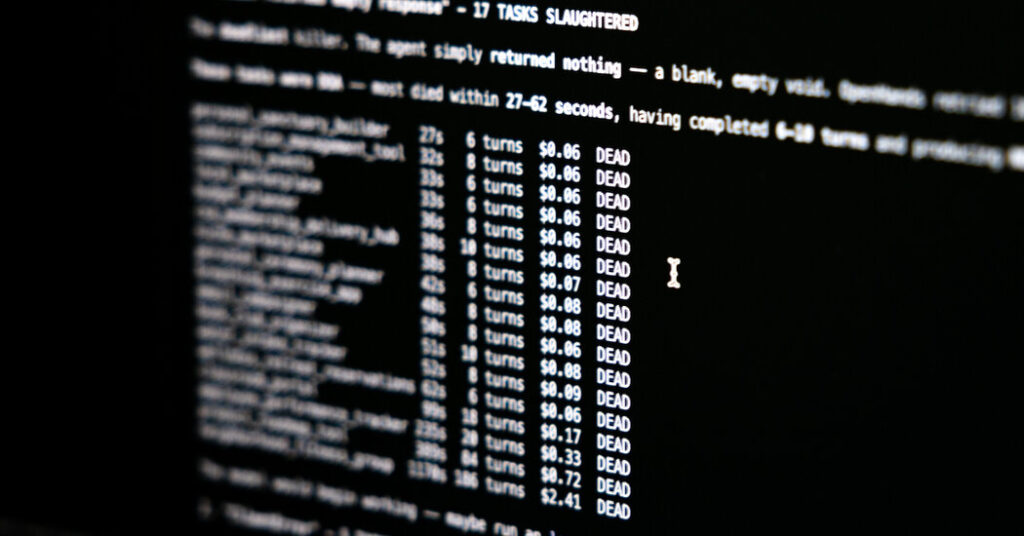

Agents are most useful when doing research and generating reports as they parse documents on the internet or on a company’s private network. But OpenClaw bots can include false information — or even completely made-up information — in their reports, said Rayan Krishnan, chief executive of Vals AI, a company that evaluates the performance of the latest A.I. technologies.

When people let these bots loose on other tasks, they can cause problems. Summer Yue, a researcher with Meta’s A.I. lab, recently revealed that when she asked an agent to organize her email, it started deleting messages by the thousands.

Claude Cowork, a system from Anthropic, is more reliable than OpenClaw when doing research in areas like finance, health care and the law. But the technology — called a “research preview” — is still unpredictable, according to tests run by Vals AI. During one test, the system permanently corrupted a file it was asked to edit.

Dr. Christian Péan, an orthopedic trauma surgeon in Durham, N.C., who also runs a health care technology start-up, uses Claude Cowork to generate research reports and spreadsheets, summarize emails and draft responses. “I use it to automate a lot of my life,” he said. “It functions almost like my chief of staff.”

But he is careful to check everything the bots do. He does not let them send emails unless he has approved them first.

“All these A.I. tools sound very confident — and a lot of what they do is impressive — but you will miss hallucinations and things that aren’t true unless you have the expertise to check everything they are doing,” he said.

But companies like Anthropic and Shortwave continue to refine these technologies. Many researchers and software engineers argue that A.I. has steadily improved over the last several years and that it will continue to rapidly improve.

“Things are constantly changing,” Mr. Wild said. “With A.I., people might form an opinion in June — and it’s correct in June — but by August, it might not be correct at all. There is a sea change every two or three months.”

Cade Metz is a Times reporter who writes about artificial intelligence, driverless cars, robotics, virtual reality and other emerging areas of technology.
