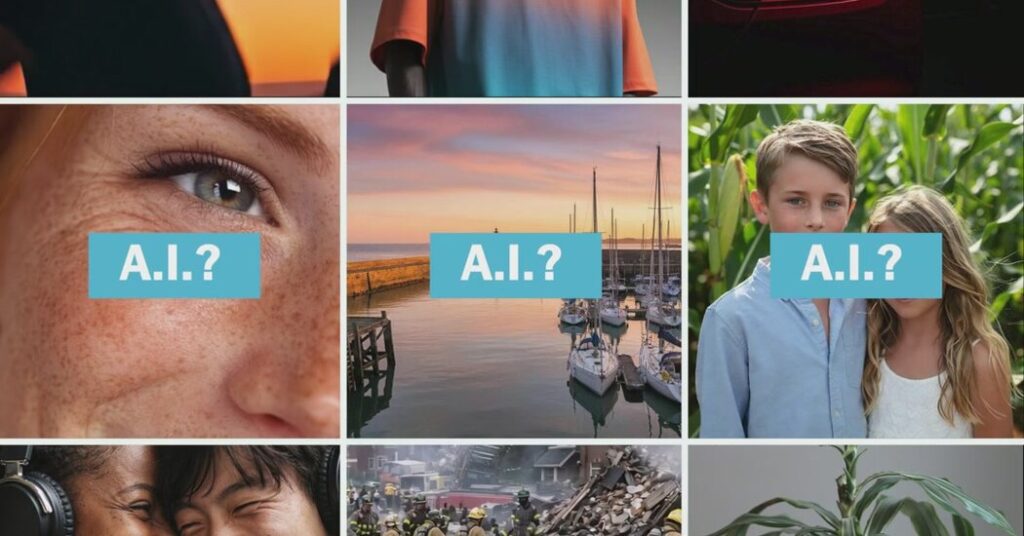

Content generated by artificial intelligence has become so lifelike that it’s often impossible to tell whether a video or an image floating through social media is real or fake.

Enter the A.I. detector.

More than a dozen online tools claim they can tell the difference between what’s real and what’s A.I. by looking for hidden watermarks, composition errors and other digital clues.

The reality is more mixed, according to a battery of tests conducted by The New York Times. While many tools did a good job detecting some A.I. content, they were not accurate enough to offer users complete confidence.

The findings suggest that these detectors can help confirm suspicions about A.I.-generated media, but it is hard to rely on any of them to make definitive rulings. That presents fresh challenges for internet users and fact-checkers trying to manage the A.I. fakery that has flooded social media in recent months.

Overall, we found that any conclusions drawn by the tools should be supported by other research, like details in official photographs or news reports.

Many people nevertheless see the detection tools, which now analyze not just imagery but also video and audio, as powerful arbiters of truth at a crucial moment when A.I.-generated content is coursing through social media and deceiving users during breaking news events. The tools are being adopted by banks and insurance companies trying to spot A.I.-powered fraud, by teachers looking for plagiarism, and by internet sleuths trying to verify images and videos circulating online.

“You’re never going to have a detection tool that is able to 100 percent detect whether A.I. has been used in text, images, video, whatever form it is,” said Mike Perkins, a professor at British University Vietnam who studied A.I. detectors and found that text detectors were unreliable. As the A.I. generators improve, he said, A.I. detectors will struggle to catch up, creating an “arms race.”

Our tests reviewed more than a dozen A.I. detectors and chatbots capable of identifying fake video, audio, music and images, conducting more than 1,000 scans in all.

Here’s what we found.

Many could detect basic fakes.

Most A.I. fakes circulating online today do not take a lot of work to create: Users can type in simple prompts and receive a lifelike image or video of real people. That kind of content flooded the internet soon after Nicolás Maduro, Venezuela’s ousted president, was arrested in January.

To test how well the detectors handle this kind of basic fake, we asked ChatGPT, the A.I. chatbot made by OpenAI, to create a photograph of two people laughing. It produced a lifelike image that nevertheless contained several markers of A.I. imagery: The lighting, composition and features were all a bit too perfect, and a hand seemed to ripple unnaturally.

Many of the A.I. detectors quickly spotted that the image was A.I.-generated, with a few exceptions. ChatGPT, for example, couldn’t detect the fake image that it had created just moments earlier. (The Times has sued OpenAI and its partner, Microsoft, claiming copyright infringement of news content related to A.I. systems. OpenAI and Microsoft have denied those claims.)

A.I. detectors are generally trained on huge collections of A.I.-generated works, learning to spot the digital signals left behind by the A.I. tools.

The Times shared the results of the tests with the A.I. detector companies. Many of them responded that no detector would be completely accurate all of the time. In a sign of how fast-moving the A.I. detection business is, several companies said they were on the brink of releasing major updates to their models that would perform better.

“This will be an ongoing battle of determining ‘Is this A.I. or not?’ for the foreseeable future,” wrote Anatoly Kvitnitsky, the chief executive of AI or Not, in an email. The company ran additional tests on the images that its public model failed to identify, finding that its newest model could correctly identify them as A.I.

They struggled with more complex images.

The detectors struggled more with images like this one: a fictional scene of a seaside port with very few markers that it’s A.I.-made. (Look closely, though, and you’ll notice that some of the boats connect to docks that go nowhere.)

This may be because some of the detectors are mostly trained to identify faces so they can be used for security and anti-fraud purposes.

Few detectors can analyze videos.

A.I. videos are rapidly becoming the next threat to social media. The release of Sora, an A.I. video generator app created by OpenAI, led to a surge of fake videos on social media — with few labels from social media companies indicating they were fake.

Only a few A.I. detectors are capable of analyzing video and audio, and those that could produced mixed results.

Video and audio have emerged as key security threats for businesses: Imagine a call from a chief executive that was actually an A.I. replica of that person’s voice, or a videoconference with an A.I. character that looks real. Detection companies have invested heavily in spotting such fakery, offering the ability to identify whether video, audio or music is A.I.-made, even by analyzing live videoconference feeds. Some analyses highlighted which parts of a video were fake and which were deemed real.

This A.I.-generated video of a building collapse, for instance, might fool social media users, but most of the A.I. detectors knew that it wasn’t real.

This video of a model, though, stumped many of the detectors.

It was not clear why some of them failed to spot the A.I. fakery, which was quite lifelike and created by Midjourney, an A.I. image and video generator.

They are better at detecting fake audio.

A.I.-generated audio quickly leaped ahead of images and video to become especially lifelike.

Tools like those from ElevenLabs create remarkably lifelike voices, complete with breaths, pauses and dynamic intonation. Such voices are used for viral videos and memes, but also for telephone scams and impersonations.

Seven of the A.I. detectors and chatbots we tested could check for fake audio, and Sensity and Resemble.ai did the best with it. Even when the audio was heavily altered, the tools managed to conclude with high confidence that the voices or music was A.I.-generated. They also spotted the real voices in our tests.

The clip below was created by ElevenLabs using a script generated by Google’s Gemini chatbot. Most A.I. detectors spotted the digital fake. We had to alter the clip significantly — speeding it up and adding music — before the detectors began to get it wrong.

They did a better job identifying real images.

One risk of A.I. detectors is that they could call something fake when it is real, throwing chaos into developing news events or raising doubts about genuine images. When a gruesome image of a charred body circulated on social media at the start of the conflict between Israel and Hamas, some observers dismissed it as an A.I.-generated fake. Several experts said it was probably real, but by then the doubts had become widespread.

Overall, the detectors did a better job of spotting real images than fake ones. They all knew that this plant, a striped dracaena, was real — a rare instance when all the detectors agreed.

They also performed well at analyzing real videos — like recordings from an iPhone or news clips downloaded from the internet. Though A.I.-generated audio fooled some of the detectors, they all correctly labeled a clip of a reporter reading A.I.-generated text as the real deal.
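One way to make the gap between real and fake media concrete is to tally correct verdicts separately for each kind of item. The Python sketch below is an illustration only: the detector names and scan rows are invented, and it is not the method or the data The Times used.

```python
from collections import Counter

# Hypothetical scan log: (detector name, true label, detector verdict).
# Every row is invented for illustration; none comes from the article's tests.
SCANS = [
    ("detector_a", "real", "real"),
    ("detector_a", "fake", "fake"),
    ("detector_a", "fake", "real"),
    ("detector_b", "real", "real"),
    ("detector_b", "fake", "fake"),
    ("detector_b", "fake", "real"),
]


def accuracy_by_label(scans):
    """Return the share of correct verdicts, split by the item's true label."""
    correct = Counter()
    total = Counter()
    for _detector, truth, verdict in scans:
        total[truth] += 1
        if verdict == truth:
            correct[truth] += 1
    return {label: correct[label] / total[label] for label in total}


rates = accuracy_by_label(SCANS)
print(rates)
```

Splitting the tally this way keeps the two kinds of mistake apart: a fake that passes as real and a real item flagged as fake carry different risks, and a single overall accuracy figure would hide which one a detector is prone to.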

Real images that were edited with A.I. presented unique challenges.

Some A.I. fakes blend real content with A.I.-generated media to create lifelike fakes that are even harder for the naked eye to detect. The White House, for instance, posted an altered image of a woman who was arrested in Minneapolis last month. Most of the A.I. detectors thought the altered image was real.

We found that most detectors missed alterations in our tests, too. This image, for instance, was edited to include smoke in the background, evoking an explosion. Only a few knew it was edited.

Copyleaks, an A.I. detector, tested a batch of images for us using its upcoming model. It caught the precise alterations made to this photo, highlighting the smoke as A.I.-generated but signaling that the rest of the image was real.

Stuart A. Thompson writes for The Times about online influence, including the people, places and institutions that shape the information we all consume.

The post These Tools Say They Can Spot A.I. Fakes. Do They Really Work? appeared first on New York Times.