A panel of federal judges on Wednesday denied a motion from Anthropic to stop the Department of Defense from labeling it a security risk, a setback for the artificial intelligence company in its battle with the Trump administration over how A.I. should be used in warfare.

In a four-page ruling, the three-judge panel of the U.S. Court of Appeals for the District of Columbia Circuit said Anthropic had “not satisfied the stringent requirements” for a stay of the security risk label.

“In our view, the equitable balance here cuts in favor of the government,” the judges wrote. The D.C. Circuit is one of two federal courts where Anthropic has sued the Pentagon; the other is in California.

The ruling is not a final decision, and the case is set to continue. But it was an early win for the Trump administration, which has been fighting with Anthropic in the aftermath of a failed $200 million contract negotiation over the use of A.I. in classified systems.

The Defense Department and Anthropic had disagreed over contract terms related to how the Pentagon could use the company’s A.I., which led Defense Secretary Pete Hegseth to label the start-up a “supply chain risk.”

That designation is typically applied to foreign companies that pose national security concerns. Being labeled a supply chain risk effectively blacklists a company from doing business with U.S. government entities.

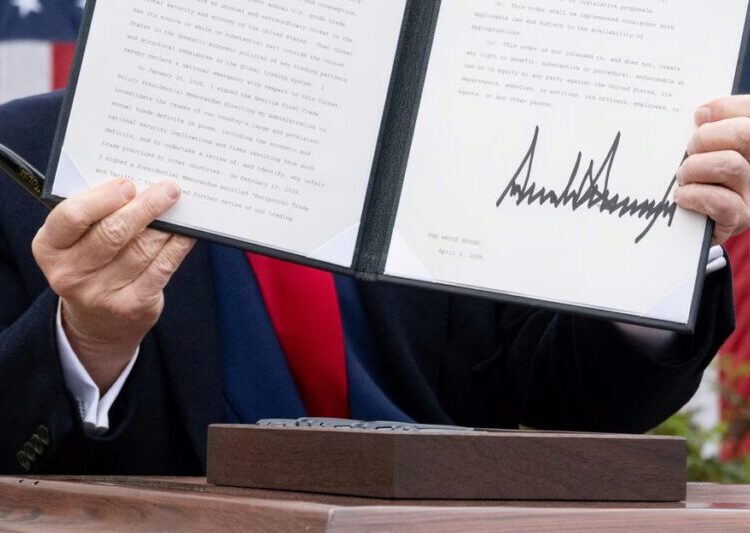

Mr. Hegseth issued orders designating Anthropic such a risk under two different laws. Anthropic then filed two lawsuits — one in the District of Columbia court and the other in U.S. District Court for the Northern District of California — to challenge the label under each of the laws, accusing the Pentagon of using the designation inappropriately to punish the company on ideological grounds.

Last month, the judge in the California case ruled in Anthropic’s favor, saying the A.I. company could, for now, continue its federal contracts with certain defense-related agencies.

Wednesday’s ruling from the District of Columbia court continues to bar Anthropic from new contracts with the Pentagon. The Department of Defense has said it will remove Anthropic’s software from its systems over the next six months.

In its ruling, the panel acknowledged that Anthropic would “likely suffer some degree of irreparable harm.”

Todd Blanche, the acting attorney general, wrote on social media that the ruling was “a resounding victory for military readiness.” He added, “Our position has been clear from the start — our military needs full access to Anthropic’s models if its technology is integrated into our sensitive systems.”

“We’re grateful the court recognized these issues need to be resolved quickly,” a spokeswoman for Anthropic said in a statement. “While this case was necessary to protect Anthropic, our customers and our partners, our focus remains on working productively with the government to ensure all Americans benefit from safe, reliable A.I.”

Dario Amodei, the chief executive of Anthropic, has long said A.I. must have limits for safety reasons. During the contract negotiations with the Pentagon, Anthropic wanted limits imposed on its A.I.’s use for domestic surveillance and autonomous weapons, while the Department of Defense argued that no private contractor could tell it how to use technology.

Anthropic, which is based in San Francisco, has argued that the Pentagon’s application of the supply chain risk label not only was a punishment on ideological grounds but also violated its First Amendment rights. Anthropic has said its business was suffering from the designation, and asked the courts for a temporary stay while it argued its cases.

In the California court last month, Judge Rita F. Lin wrote that she agreed that Anthropic was being punished for criticizing the government.

“Nothing in the governing statute supports the Orwellian notion that an American company may be branded a potential adversary and saboteur of the U.S. for expressing disagreement with the government,” she wrote.

The conflicting rulings from the two courts have created confusion.

“The Pentagon’s actions and the D.C. Circuit’s ruling create substantial business uncertainty at a time when U.S. companies are competing with global counterparts to lead in A.I.,” Matt Schruers, the chief executive of the Computer and Communications Industry Association, said in a statement.

Mike Isaac is The Times’s Silicon Valley correspondent, based in San Francisco. He covers the world’s most consequential tech companies, and how they shape culture both online and offline.

The post Federal Court Denies Anthropic’s Motion to Lift ‘Supply Chain Risk’ Label appeared first on New York Times.