Welcome to Eye on AI, with AI reporter Sharon Goldman. The pro-Iran meme machine trolling Trump with AI Lego cartoons…Amazon’s Andy Jassy defends Amazon’s $200 billion spending spree…OpenAI pauses Stargate U.K. data center, citing energy costs.

It’s been another one of those wild weeks in AI, with Anthropic electing not to release its new Claude Mythos model because of concerns about the cybersecurity risks it poses (and forming a coalition to use a preview version of the model to bolster cybersecurity defenses); Meta releasing its first AI model since hiring Alexandr Wang; and mounting expectations about OpenAI’s upcoming new “Spud” model.

Most of these AI models run on Nvidia GPUs, the sophisticated and expensive AI chips (at over $30,000 a pop) that power their training and output. But across the industry, access to those chips remains a bottleneck. OpenAI president Greg Brockman, for example, has said allocating GPUs at OpenAI is “pain and suffering.”

This week, at the HumanX conference in San Francisco, I discovered that even inside Nvidia, GPUs are scarce.

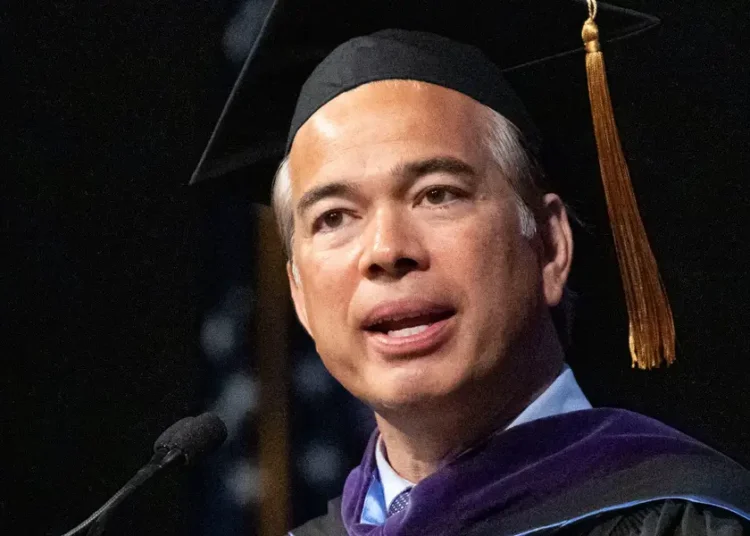

I sat down with Bryan Catanzaro, who leads applied deep learning research at Nvidia, overseeing teams working on AI-driven graphics, speech recognition, and simulation. Catanzaro was also among the first, back in the early-to-mid 2010s, to notice researchers snapping up Nvidia GPUs to train AI models—a signal that helped push CEO Jensen Huang to double down on AI, setting the stage for the company’s now-historic run.

Today, though, even Catanzaro’s teams are struggling to access enough GPUs. “My team uses AI very deeply in our work, and their primary complaint is they want higher limits,” Catanzaro told me. “They want more GPUs.”

“Efficiency is also intelligence”

In fact, he said one of his main jobs now is simply trying to secure more compute for his teams. “We’re all supply constrained,” he said. “Jensen will say, ‘I’m sorry, Bryan, but those are sold.’ We operate within those constraints.”

One of Catanzaro’s projects has been leading the team building Nvidia’s Nemotron, a family of models that are open source—meaning users can freely download them to use, study, or modify. To be clear, Nvidia isn’t trying to compete in the model-building race with the likes of OpenAI and Anthropic. Instead, it’s building them to strengthen a developer ecosystem that remains tied to Nvidia hardware and software.

The Nemotron models are known for being particularly GPU-efficient. And Catanzaro said it’s the very constraints on GPU access at Nvidia itself that are driving the push to make Nemotron models more efficient. “In a supply-constrained world, efficiency is also intelligence,” he said.

No longer a science project

But surprisingly, efficiency isn’t bad for business. Catanzaro said it was Jevons Paradox at work: When something becomes more efficient, demand often surges. “People find all sorts of new ways to use a thing when it gets more efficient,” he said.

Still, he acknowledged that Nemotron’s growing visibility inside Nvidia has also helped unlock more resources. “We’ve been working on [Nemotron] for a long time, but it’s really only in the past six months that it’s gotten more attention. As people inside Nvidia better understand the importance of this work, you get better storytelling, better collaboration, and more support across the company.”

Nvidia has realized, he added, that it can no longer take a hands-off approach to the AI ecosystem. In the past, Nvidia could rely on others to build the models and applications that drove demand for its chips. Now, as AI becomes more competitive and chip-constrained, the company sees a more active role for itself in shaping how that ecosystem develops.

“In the past, some people felt like we could just let the ecosystem take care of itself,” he said. “Now it’s much more obvious that Nvidia has a bigger role to play—a real responsibility and opportunity with Nemotron.”

That framing also helps elevate the Nemotron work inside Nvidia, where teams are competing for scarce GPU resources. “This isn’t a science project,” Catanzaro said. “It’s not just me asking for resources for my team. This is about Nvidia’s future.”

With that, here’s more AI news.

Sharon Goldman @sharongoldman

The post Even Nvidia’s own research teams can’t get enough GPUs amid the race for AI computing power appeared first on Fortune.