Joseph V. Sakran is a trauma surgeon and executive vice chair of surgery at Johns Hopkins Hospital. Rahul Gorijavolu is a medical student and researcher at Johns Hopkins University School of Medicine and MIT Critical Data.

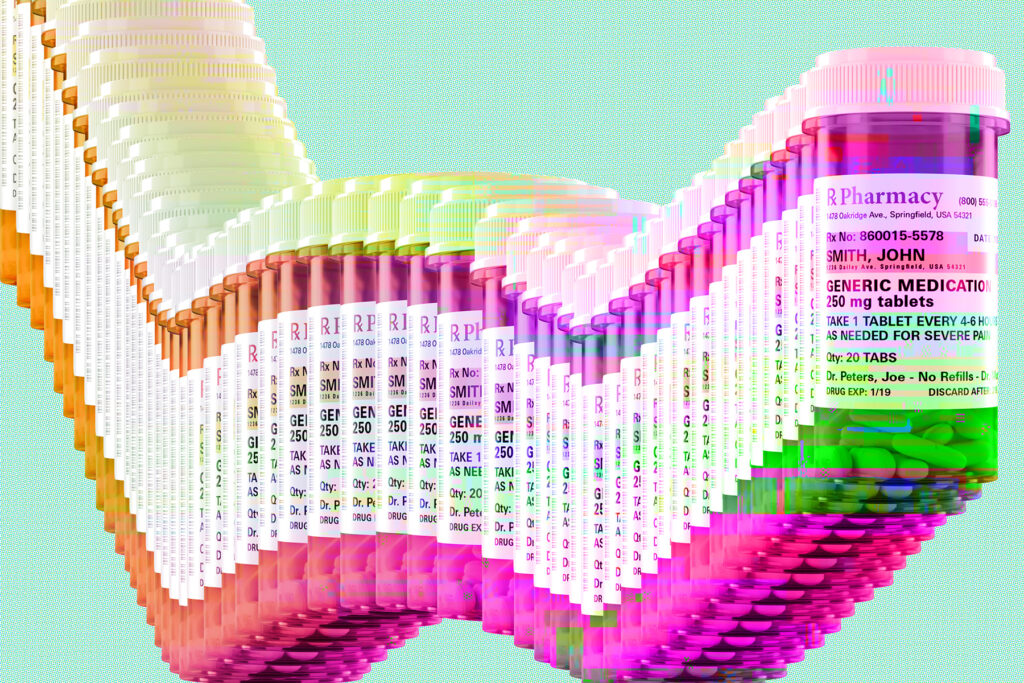

In Utah, an artificial intelligence platform called Doctronic is legally renewing prescription medications for patients without physician involvement. For a $4 fee, it conducts the intake, evaluates the patient and sends the prescription to a pharmacy.

Operating under a state regulatory exemption, the program is live and commercial. It handles real prescriptions for real patients, and the company plans to expand it to a dozen more states this year.

Yet the entire scientific case for its safety rests on a single unvalidated study.

That study was posted to the preprint server medRxiv in July. It has not been peer-reviewed. All eight of its authors hold equity in Doctronic. The company’s own internal committee conducted the ethics review. Colleagues who requested the underlying data were denied it. Eight months later, the study still hasn’t been published in a peer-reviewed journal.

That is the evidentiary foundation for the first autonomous AI prescribing program in the United States. And it is cause for serious concern.

We are now in a moment where AI development is outrunning the regulations designed to govern it, and patients face greater risk as a result. In health care, unchecked risk at scale is not a business disruption. It is a public health crisis.

Doctronic’s program operates under Utah’s AI regulatory sandbox, a commendable and innovative but premature framework that lets companies test new products under reduced oversight. The company’s cornerstone claim is that its AI matches human clinician treatment plans 99.2 percent of the time. State regulators have cited this figure. News outlets have repeated it. It has become the number that justifies the whole operation.

But nearly every report has missed one detail: That figure was derived from urgent care encounters, not from chronic medication renewals, which are the focus of the Utah pilot. These are different clinical tasks. And 99.2 percent accuracy, applied at scale, still means errors affecting thousands of patients.

The question is which patients. There is no way of knowing, from an unreviewed company-authored preprint with unavailable underlying data, whether those errors are randomly distributed or systematically concentrated among the elderly, the immunocompromised, patients with complex medication regimens or patients whose conditions have quietly changed since their last doctor’s visit.

We understand the appeal of this approach. Medication renewals for stable chronic conditions are among the lowest-risk prescribing tasks in medicine. Patients are already on the medication, and the renewal process is often perfunctory. If AI can handle these routine tasks reliably, it could free up doctors for more complex care.

But low-risk is not no-risk. Chronic conditions evolve silently. Blood pressure medications become insufficient as cardiovascular disease progresses; diabetes medications require adjustment as kidney function declines. New medications introduce interaction risks. The routine nature of renewals makes clinical oversight easy to take for granted, but removing it entirely requires rigorous evidence showing that the AI can detect and act appropriately upon these silent changes.

Furthermore, research shows that AI systems perform differently across patient populations, and there is no reason to assume prescription tools are an exception. A mammography AI approved by the Food and Drug Administration was nearly 50 percent more likely to incorrectly flag normal results in Black women than in White women. We don’t know whether Doctronic has similar blind spots, because the data has not been shared.

By contrast, even a digital thermometer must have validation data submitted to the FDA, demonstrating safety and efficacy through transparent evidence and surviving regulatory scrutiny, before it ever reaches patients. This is not a regulatory gap. It is a regulatory void that leaves room for harm in the name of innovation.

These safety concerns are widely shared. The American College of Physicians wrote that prescription drugs should not be prescribed without physician involvement. The American Medical Association warned that accuracy claims don’t replace clinical judgment. A Nature commentary warned of a public health catastrophe.

The FDA, meanwhile, says the program falls outside its regulatory purview. On the day the program was publicly announced, the agency published guidance reducing its oversight of AI-enabled health software.

AI is bound to transform medicine. But a rigorous evidence bar is the foundation that makes such innovation trustworthy.

We have been here before. IBM Watson recommended unsafe cancer treatments before its data had been independently reviewed. The Epic Sepsis Model flagged roughly 1 in 5 patients who did not have sepsis. In both cases, widespread adoption preceded independent scrutiny. In both cases, patients paid the price.

What should responsible AI governance look like? The FDA should expand its regulatory purview to treat autonomous prescribing systems as the medical devices they functionally are. States operating regulatory sandboxes should require independent validation before deployment. Congress should require any AI system that makes medical decisions to make its training data and validation results publicly available for independent scrutiny. The window to act is this year, before autonomous AI prescribing expands and the question becomes moot.

Affordable, accessible AI prescribing could be genuinely liberating for patients and clinicians alike. It could reduce costs, eliminate barriers to routine care and free physicians to focus on cases that truly require human judgment.

Four dollars is a low price for a prescription. But cutting corners on the evidence for AI prescribing is bound to exact a far heavier cost.

The post Should AI prescribe your meds? The evidence is lacking. appeared first on Washington Post.