Standing up to unreasonable and ethically challenging requests from the government ought to be commonplace in a free country. That’s what the artificial intelligence powerhouse Anthropic did last week when it insisted on contract terms barring the Defense Department from using its AI software to conduct mass surveillance on the American public or to drive lethal weapons systems not overseen by people.

Sadly, such defiance of the Trump administration is rare. While Anthropic stood on principle and countered the Defense Department at potentially tremendous cost to its business, the rest of corporate America, particularly Anthropic’s Big Tech competitors, has repeatedly responded to bullying with acquiescence.

That’s one reason we all should cheer for Anthropic in its lonely fight for responsible guardrails on the emerging technology of AI. More than merely winning back the company’s lost government business, a victory would help rein in an administration that has made a habit of improperly leveraging its gargantuan power to bend businesses and other institutions to its will.

In normal times, this would be a ho-hum business dispute between a prominent corporation and a headstrong branch of government over the proper use of cutting-edge tech. The government isn’t wrong to suggest that one company can’t dictate how its products should be used, and the Pentagon can certainly stop working with Anthropic, within the confines of its existing contract, if it wants to.

But these are not normal times, and this is no run-of-the-mill emerging technology.

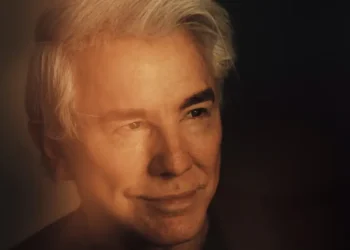

Claude, Anthropic’s signature product, is one of the most popular artificial intelligence tools for businesses, and CEO Dario Amodei has become a leading voice in the industry on the potential perils of AI. He has spoken forthrightly of the job losses that could result, said that the new technology will “test who we are as a species” and stressed the need for regulation.

Make no mistake, Anthropic is interested in being a warfighting tool, despite President Donald Trump calling it a “radical left, woke company” full of “leftwing nut jobs.” It has developed one of the more effective generative AI applications on the market, which is why the Pentagon wanted so badly to continue working with it. Indeed, the five-year-old San Francisco-based company, last valued at $380 billion, has been supplying the Defense Department, including through a partnership with the analytical software company Palantir — which has notably friendly relations with the Trump administration.

Anthropic’s original sin with Trumpworld was its advocacy of AI regulation. Much of Silicon Valley views AI guardrails as potential business handcuffs, a notion repeatedly expressed by the likes of venture capitalist Marc Andreessen, who accused Biden administration officials of trying to “kill” AI, and David Sacks, another Silicon Valley investor, who is Trump’s AI and crypto czar.

Thus, it was probably inevitable that Emil Michael, the Defense Department’s undersecretary for research and engineering, would go on the offensive last week when talks with the company soured. In a post on X, Michael labeled Amodei a “liar” with a “God-complex.”

Asked by Bloomberg to elaborate, Michael said he was concerned Anthropic and other AI companies are “making their own policies that sit on top of democratic policies that are voted on by the people, passed by Congress, [and] signed by the president.”

The irony is rich here. A decade ago, Michael was a top lieutenant to then-CEO Travis Kalanick at Uber, a company that made a name for itself by flouting local taxi regulations. Uber’s habit of asking neither for permission nor forgiveness is the stuff of Silicon Valley legend. That Trump’s “Department of War” conducts extrajudicial killing operations against civilian boats it claims, but doesn’t prove, are run by narcotics peddlers also doesn’t speak well of its adherence to “democratic policies” or, for that matter, the law.

Anthropic likely has a good legal case against the federal government for violating its right to due process, particularly in reference to Trump and Defense Secretary Pete Hegseth’s designation of the company as a “supply-chain risk,” which bans all agencies from doing business with it. The situation echoes the on-again, off-again litigation brought by law firms that charged Trump’s government with unconstitutionally cutting off their business.

OpenAI, Anthropic’s bitter rival whose ChatGPT is a consumer favorite, demonstrated its opportunism by swooping in to take Anthropic’s place with the Pentagon. After a popular backlash against ChatGPT, it won concessions similar to what Anthropic had been seeking. Anthropic’s Claude, which hasn’t focused on consumers, shot to the top of the list of most downloaded apps in Apple’s App Store over the weekend, amid widespread criticism of OpenAI’s move.

“I am confused about why the Pentagon would accept this language when they just tried to nuke Anthropic for asking for something very similar to this,” observed AI legal researcher Charlie Bullock. The facts, coupled with comments by the president and his minions, would seem to validate Anthropic’s litigation narrative that it had been unfairly targeted.

AI needs rigorous regulation if there is to be hope that its use will be safe. And the country needs more companies with the courage to lose business when the Trump administration wants to dictate terms that could lead to dangerous outcomes.

The post Anthropic takes on Hegseth and Trump appeared first on Washington Post.