Computer literacy. Internet literacy. Social media literacy. Mobile literacy. Virtual reality literacy.

Every few years, the tech industry introduces a new kind of product, then prods schools to teach millions of students how to use it.

The pitch to train schoolchildren on the latest tech has stayed roughly the same since the introduction of personal computers in the late 1970s: improved learning and better career prospects. Since then, campaigns for a host of new tech literacies have come and gone — even as some of the promises failed to materialize.

Now tech giants like Google, Microsoft and OpenAI are urging schools to teach the latest topic: A.I. literacy.

Here’s what to know.

What is A.I. literacy?

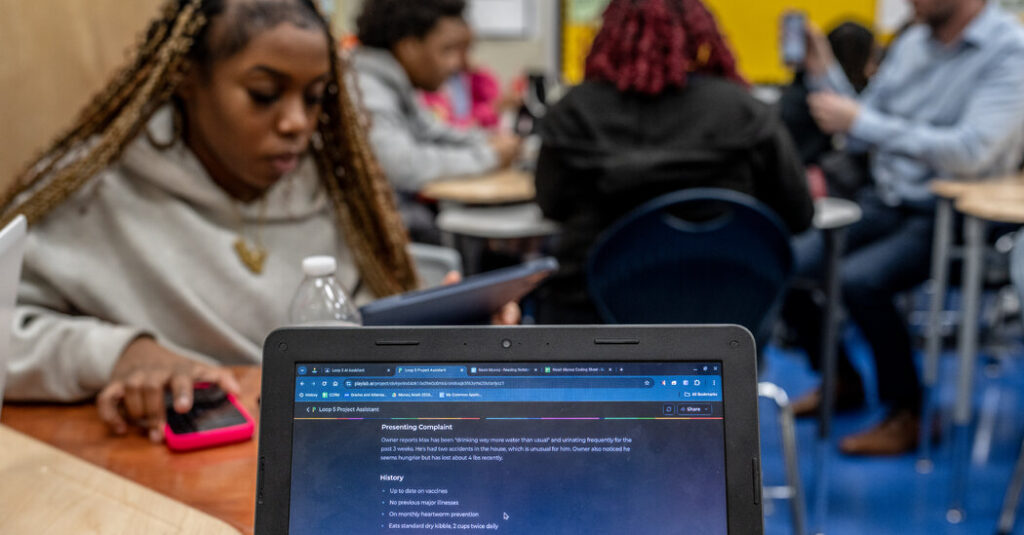

The latest school tech lessons often revolve around chatbots, which can produce human-sounding writing, realistic-looking videos and reams of computer code.

Some schools are teaching students to prompt chatbots like Google’s Gemini and examine the information they produce. Some lessons ask students to study the associated societal risks, like chatbot misinformation or biased results. Some schools are also encouraging students to use A.I. to create their own apps.

The push for A.I. education in schools is not new. In 2016, with A.I.-powered appliances like robotic vacuum cleaners gaining popularity, researchers in Austria proposed that schools teach A.I. concepts to students starting in kindergarten.

A few years later, a group of academics in the United States came up with an A.I. education framework for schools to teach students technical concepts and A.I. ethics.

Now, some schools are racing to teach students to assess and use chatbots, the latest popular form of A.I.

What’s behind the A.I. push in schools?

Tech boosters say it’s urgent for schools to train students on chatbots to help with learning, prepare young people for the job demands of an “A.I.-driven future” and help boost U.S. economic growth.

Last April, President Trump issued an executive order on “Advancing Artificial Intelligence Education for American Youth.” It called on schools to “integrate the fundamentals of A.I. into all subject areas,” starting in kindergarten.

In July, Microsoft announced a $4 billion cash and technology effort to spur educational institutions to teach “A.I. skilling.” In September, Google promised $1 billion in A.I. training, including providing the company’s Gemini tools to “every high school in America.”

This month, the U.S. Department of Labor issued A.I. education guidelines to ensure “all American workers are able to share in the prosperity that A.I. will create for our economy.” Among the foundational skills the agency listed for students: learning how to write clear chatbot prompts and check the answers for accuracy.

Which other groups are shaping A.I. education?

Some researchers and nonprofit groups are backing broader education efforts covering an array of related societal issues. Among them, the Kapor Center, a nonprofit in Oakland, Calif., recommends that students assess A.I. safety risks and examine tech industry power dynamics, including the companies profiting most from A.I.

These experts are urging schools to help students gain a technical understanding of A.I. as well as skills to investigate the consequences of the latest technologies.

The Computer Science Teachers Association, a nonprofit with nearly 10,000 teachers as members, recently revised its national learning standards to include a new proposed student skill: identifying how products like A.I. prioritize different values, such as promoting user engagement over accuracy or human civility.

“Students need to be able to make informed decisions about when and how A.I. tools are used — both in their own work and also when A.I. is used on them, as citizens who are entering a world where more and more decisions about their lives will be made by machines,” said Jake Baskin, the executive director of the Computer Science Teachers Association.

What have we learned from past tech literacy campaigns?

Take social media literacy. The programs have helped some young people more easily identify certain online hazards. But tech literacy efforts are generally not designed to curb underlying risks like predators and sextortion, problems that regulators and lawmakers have long pressed tech companies to address.

In 2017, Google introduced a free digital citizenship curriculum called “Be Internet Awesome.” The gamelike lessons, featuring colorful virtual worlds like “Reality River,” asked students to distinguish between facts and misinformation or to make choices about how they shared their personal information online.

The intent was to help students become more alert, safer and kinder online. More than 100 million children have since tried the lessons, according to a Google blog post in 2024.

But a study of the digital citizenship game, involving more than 1,000 fourth to sixth graders, found mixed results.

Students who were randomly assigned to the Google lessons showed better knowledge of concepts like “catfishing” and approaches to handling online problems like mean behavior, according to the study, which was published in 2023 in Contemporary School Psychology, a peer-reviewed journal.

The Google lessons, however, did not reduce mean online behaviors like cyberbullying or improve children’s online kindness or their data privacy practices, the study said.

Google did not respond to a request for comment.

Natasha Singer is a reporter for The Times who writes about how tech companies, digital devices and apps are reshaping childhood, education and job opportunities.

The post ‘A.I. Literacy’ Is Trending in Schools. Here’s Why. appeared first on New York Times.